Continuous Intelligence - Integrating Intelligence in Real-Time?

The amount of data generated today is mind-boggling, with up to 90% classified as unstructured data. This presents a significant challenge for organisations aiming to process and gain insights from such data. The task becomes even more complex when trying to label data consistently and design models and programs that handle this volume effectively. Apache Flink offers a solution through its real-time analytics capabilities, enabling organisations to process unstructured data efficiently and extract valuable insights without missing crucial information. With Flink's stream-processing framework handling the complexity of unstructured data, teams can stay focused on deriving actionable insights.

We humans are great at problem-solving and innovating, and one result is Artificial Intelligence and deep learning models that can answer many of these questions. With them, the machine can learn from every new addition without human intervention, which frees the team to direct new inquiries at the insights while data keeps flowing in. Those insights become the next iteration of a product or a new product altogether. Data involves risk, and relying on humans to process it by hand is not wise. It isn't easy when millions or billions of records arrive in many forms, structured and unstructured, as text, images, and video. This is where Continuous Intelligence (CI) comes in, allowing us to analyze this data accurately in real time.

What makes Continuous Intelligence different?

CI enables us to make smarter business decisions using real-time data streams and advanced analytics. Unlike traditional analysis, CI is always on, providing situational awareness and prescribed actions that allow businesses to be proactive. Conventional operational decisions, by contrast, are made by one-time calculations on historical data, that is, data already captured at a single moment.

Let’s understand the difference with an example. Consider an app that calculates the distance users have walked in a month by using GPS location data. The traditional approach would be to make a one-time calculation on all the data transmitted from the user's device and stored over the past month. CI uses real-time data stream processing, which allows the analytics to be updated continuously whenever the GPS position is refreshed.
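The walking-distance example can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the names `haversine_km`, `batch_distance_km`, and `RunningDistance` are hypothetical, GPS fixes are assumed to arrive as `(latitude, longitude)` pairs in degrees, and the great-circle (haversine) formula is assumed to be an acceptable distance measure.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation

Fix = Tuple[float, float]  # (latitude, longitude) in degrees


def haversine_km(a: Fix, b: Fix) -> float:
    """Great-circle distance between two GPS fixes, in kilometres."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def batch_distance_km(fixes: Iterable[Fix]) -> float:
    """Traditional approach: one calculation over all stored fixes."""
    stored = list(fixes)
    return sum(haversine_km(p, q) for p, q in zip(stored, stored[1:]))


@dataclass
class RunningDistance:
    """Continuous approach: the total is updated on every new fix."""
    total_km: float = 0.0
    last_fix: Optional[Fix] = None

    def update(self, fix: Fix) -> float:
        if self.last_fix is not None:
            self.total_km += haversine_km(self.last_fix, fix)
        self.last_fix = fix
        return self.total_km


if __name__ == "__main__":
    tracker = RunningDistance()
    for fix in [(0.0, 0.0), (0.0, 1.0), (1.0, 1.0)]:
        # An up-to-date total is available after every GPS refresh.
        print(f"distance so far: {tracker.update(fix):.1f} km")
```

Both approaches arrive at the same total. The difference is that the running version keeps only the last fix and the current sum, so an answer is available at any moment without re-reading a month of stored data.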

In this way, CI augments the conventional analytics approach by running the analysis continuously rather than only occasionally. Another example that helps explain the concept of CI is machine maintenance. A traditional approach would be either to wait for the machine to break down and then fix the problem, or to replace parts on a predetermined schedule and conduct manual inspections. CI enables a predictive maintenance approach instead. Sensor-based monitoring can identify the indicators of a problem, so parts can be replaced just in time. The information from the sensors can also be used to analyse the machine's performance in real time.
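As a rough sketch of sensor-based monitoring, the snippet below flags a machine when the rolling mean of its recent readings rises above a limit. Everything here is an assumption made for illustration: the `VibrationMonitor` class, the choice of vibration as the signal, the window size, and the limit are hypothetical and not taken from any real maintenance system.

```python
from collections import deque


class VibrationMonitor:
    """Flags a machine when the rolling mean of recent readings exceeds a limit."""

    def __init__(self, window: int = 5, limit: float = 7.0) -> None:
        # window and limit are illustrative values, not real thresholds
        self.readings = deque(maxlen=window)
        self.limit = limit

    def observe(self, value: float) -> bool:
        """Record one sensor reading; return True if maintenance should be scheduled."""
        self.readings.append(value)
        window_full = len(self.readings) == self.readings.maxlen
        rolling_mean = sum(self.readings) / len(self.readings)
        # Wait for a full window so a single early spike does not raise an alert.
        return window_full and rolling_mean > self.limit


if __name__ == "__main__":
    monitor = VibrationMonitor()
    for reading in [5.0, 5.0, 5.0, 5.0, 5.0, 9.0, 9.0, 9.0, 9.0]:
        if monitor.observe(reading):
            print(f"reading {reading}: schedule a part replacement")
```

A rolling mean is only one possible problem indicator. A real deployment would more likely use a trained model, but the shape is the same: each reading is evaluated as it arrives rather than at the next scheduled inspection.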

CI is the way for systems to know what is happening around them in real time so that they can act accordingly. Google Maps is a familiar example.

How does DataOps benefit from Continuous Intelligence?

DataOps is the ecosystem that enables the continuous delivery of data, and that is what makes Continuous Intelligence effective. Real-time and deep data analytics allow organizations and businesses to keep a competitive edge. Because of this competition, you can’t wait a few months, or even a few weeks, to change your product based on your users' responses. Big tech giants and startups alike innovate continuously, each looking to dethrone the leaders of the tech space. Eventually, your customers will walk away if you don’t respond in time.

Today, brand loyalty is rare. Only a few companies have maintained it, and even they do so through continuous innovation. These innovations come from the accurate and frictionless insights that can be drawn from today's data. Continuous Intelligence has one goal: to fix problems faster than your legacy systems can. It unifies analytics, monitoring, and insight generation transparently and in less time. So you’re deploying faster with open feedback loops, and that is what boosts DataOps.

A well-orchestrated DataOps pipeline enables you to access your data quickly, with faster exploration and visualisation from data sources. It also speeds up the development, training, and testing of ML models, as well as continuous operationalisation and deployment, and thus enables continuous intelligence.

Real-time data streaming and analytics focus mainly on data that is produced, consumed, or stored within a live environment.

Examples of CI in action

- Operational Monitoring: Real-time contextual data and AI/ML-powered analytics together offer many ways to optimise operations: predicting risks, monitoring performance, and responding proactively by alerting and triggering actions in the business.

- Supply Chain Optimisation: The supply chain can deliver more value when management is based on current conditions. Combining the latest sales, economic, and seasonal data with logistics and other supply-side dynamics can drive timely decisions that move with the market.

- Predictive Maintenance with IoT: IoT data and 5G technologies enable a powerful CI use case in manufacturing, utilities, and much more. Real-time and historical data with AI/ML processing can predict and trigger proactive maintenance, leading to peak performance and continuity in business.

- Value-based Healthcare: Combining personal health and medical conditions data could power a CI application that instantly processes risk factors against a patient’s medical history and conditions, personalizing complex diagnostics and recommending value-based actions like early intervention and advice.

- Fraud Detection and Mitigation: The increase in fraudulent cases within the finance sector calls for a CI-driven approach. Monitoring transactions to spot anomalies and alert the concerned personnel, or in some cases block transactions, is one of the main ways in which Continuous Intelligence is making a big impact in the finance industry.

- Emergency Planning and Logistics: By definition, emergencies are real-time, dynamic events. Government and private sector organizations can use current weather and disaster information to predict and manage personnel, equipment, and processes as emergencies evolve.
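To make the fraud detection case more concrete, here is a minimal sketch of spotting anomalous transaction amounts on a stream. It assumes a simple z-score rule over a running mean and variance (maintained with Welford's online algorithm), which is only one of many possible techniques. The class name `TransactionAnomalyDetector`, the threshold, and the sample amounts are hypothetical.

```python
import math


class TransactionAnomalyDetector:
    """Flags amounts that sit far from the running mean of past transactions."""

    def __init__(self, z_threshold: float = 3.0, min_samples: int = 5) -> None:
        # Illustrative defaults; min_samples must be at least 2.
        self.z_threshold = z_threshold
        self.min_samples = min_samples
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def observe(self, amount: float) -> bool:
        """Return True if the amount looks anomalous against the history so far."""
        if self.count >= self.min_samples:
            std = math.sqrt(self.m2 / (self.count - 1))
            if std > 0 and abs(amount - self.mean) / std > self.z_threshold:
                # Flagged amounts are not folded into the baseline.
                return True
        # Welford's update: mean and variance in one pass, no stored history.
        self.count += 1
        delta = amount - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (amount - self.mean)
        return False


if __name__ == "__main__":
    detector = TransactionAnomalyDetector()
    for amount in [20.0, 22.0, 19.0, 21.0, 20.0, 23.0, 18.0, 500.0, 21.0]:
        if detector.observe(amount):
            print(f"alert: unusual transaction amount {amount}")
```

Because the statistics are updated one transaction at a time, the check can run inline with the payment flow, which is what makes alerting or blocking at the moment of the transaction possible.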


Continuous Intelligence with Real-Time Analytics Models and Architectures

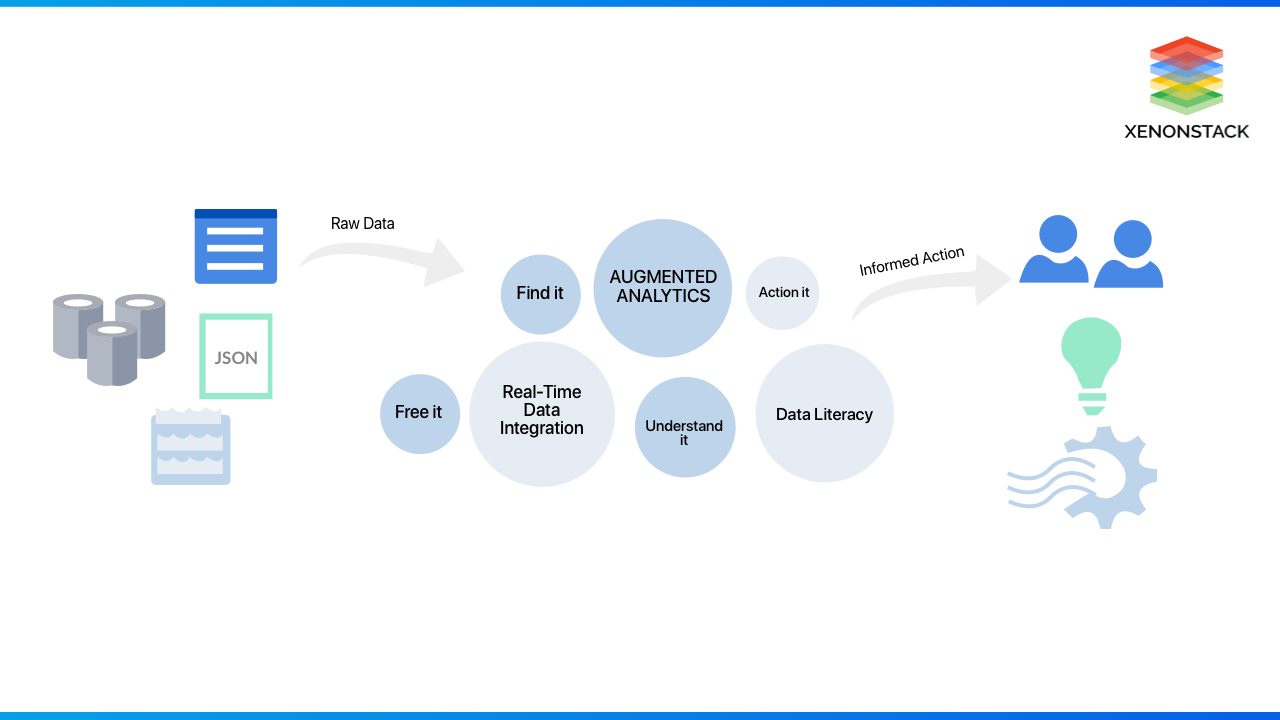

The right architecture and platform will simplify and unify the way businesses collect, organize, and analyze data, boosting the value of real-time analytics and AI. Data is not a single-use event. It's directly integrated with your business decisions. Capable organisations will refine the data continuously, gaining new insights and swiftly changing business decisions accordingly.

Gartner defined six features of CI:

- Fast: Real-time insight that keeps pace with today’s changes and needs

- Smart: The platform is capable of processing any available type of data

- Automated: Human intervention introduces mistakes and wastes your team’s time, so the process should run without it.

- Continuous: Real-time analytics requires a system that can work around the clock

- Embedded: It’s integral to your current system.

- Focused Results: Data means nothing without insights, and your program should deliver that. One should not forget the results in the search for data.

Additionally, what’s needed is a hyper-converged architecture that combines storage, computing, and networking software into a single unified system. This can simplify how a business manages, governs, and analyzes data. The right solution allows organizations to provision and deploy data services more efficiently and rapidly. Hyper-converged architecture makes it possible to scale and evolve infrastructure economically as application loads change.

Key Elements to Make Continuous Intelligence Strategy Successful

Several factors make CI more accessible and successful. They include:

Hardware

Today, businesses have access to the high-performance computing capabilities that CI needs. The price of CPUs, GPUs, storage, and high-performance memory continues to drop compared with the cost of supercomputers a few years ago. Organizations can also use all of these as instances on an interconnected platform from the major cloud service providers. With this approach, starting with CI does not require a huge upfront investment, and cloud services allow businesses to scale up their efforts quickly over time.

Analysis, AI & ML Software

The second factor helping CI succeed and enter the mainstream is the availability of new analytical algorithms that make sense of streaming data. Today, businesses have easy access to ML, AI, and stream analytics software that is also relatively easy to use.

Cloud and Middleware

The use of cloud-native applications, microservice architectures, and hybrid cloud is growing rapidly because these allow businesses to develop, deploy, and run CI across the enterprise. Middleware today enables moving, hosting, and accessing CI applications, taking performance and cost factors into account.

Continuous Intelligence in DevOps helps small and large enterprises analyze data in real time, learn from previous experience, and use it to optimize incident response time and facilitate faster delivery. Source: DevOps with Continuous Intelligence

Implementing Continuous Intelligence with Real-Time Analytics

The data streams that organisations apply CI to can originate from multiple sources:

- IoT devices and sensors

- Audio, video, text files, images, spreadsheets

- Instant messaging, chat, email

- Social networking sites, blogs, and web traffic

- Customer records, financial transactions, phone usage records, application logs

- GPS data, satellite data, smart devices

With the right platform and architecture, Continuous Intelligence can deliver solutions that:

- Analyze data across multiple sources continuously to deliver productive insights

- Connect any data stream to make real-time predictions that enhance analytic models and cognitive systems

- Deploy streaming analytics, such as natural language processing, geospatial, and predictive analytics, as a complete set to satisfy use cases and company requirements

- Use open-source technologies, APIs, and drag-and-drop development tools to visualise data and deliver value in less time, supporting faster deployment and effective production management
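Analyzing data across multiple sources usually starts with bringing the streams into one time-ordered sequence. The sketch below does this with `heapq.merge` from the Python standard library. The `Event` type, its fields, and the sample payloads are hypothetical, and each source is assumed to be already ordered by timestamp.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Event(NamedTuple):
    timestamp: float  # seconds since some shared reference point
    source: str       # e.g. "iot", "app-log", "payments"
    payload: str


def merge_streams(*streams: Iterable[Event]) -> Iterator[Event]:
    """Lazily interleave several time-ordered streams into one ordered stream."""
    return heapq.merge(*streams, key=lambda event: event.timestamp)


if __name__ == "__main__":
    sensors = [Event(1.0, "iot", "temp=70"), Event(4.0, "iot", "temp=91")]
    logs = [Event(2.0, "app-log", "login"), Event(3.0, "app-log", "checkout")]
    for event in merge_streams(sensors, logs):
        # Downstream analytics see one unified timeline.
        print(event.timestamp, event.source, event.payload)
```

`heapq.merge` returns an iterator and pulls from each input only as needed, so the same pattern works with generators that read from live sources. Stream-processing frameworks such as Apache Flink offer distributed equivalents of this step.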

Continuous Intelligence enables businesses to make decisions while events are happening. It brings meaning to fast-moving real-time data streams and helps organisations in a wide variety of applications. Solutions that support CI should include efficient and appropriate hardware, real-time analytics, and AI software. The architecture should be capable of storing, managing, and keeping historical and streaming data safe. The solution must also be deployable in the cloud or on-premises and easy to move to the platform that offers the best performance and cost.

Next Steps with Continuous Intelligence

Talk to our experts about implementing compound AI systems and about how industries and different departments use Agentic Workflows and Decision Intelligence to become decision-centric, using AI to automate and optimize IT support and operations and to improve efficiency and responsiveness.
