Natural Language Processing (NLP) is a cornerstone of enterprise innovation, allowing systems to understand, interpret, and generate human language with precision. Traditional NLP models provide value but remain limited in adaptability and decision-making. With the integration of Agentic AI and Large Language Models (LLMs), enterprises can transform static workflows into dynamic, autonomous ecosystems where intelligent agents process language and act on insights in real time. This creates a foundation for more scalable, context-aware, and outcome-driven applications.

By leveraging Agentic AI for NLP, organisations can automate advanced use cases such as conversational intelligence, knowledge retrieval, multilingual support, and document summarisation. Platforms like Akira AI and XenonStack enable agentic orchestration, combining the generative capabilities of LLMs with decision intelligence to deliver faster resolutions, improved compliance, and more accurate context handling. These adaptive systems go beyond text generation to provide decision-centric automation that enhances customer engagement, operational efficiency, and enterprise-wide productivity.

The convergence of Agentic AI, Generative AI, and LLMs enables industries to extract greater value from unstructured data and build resilient workflows. Intelligent agents collaborate on, validate, and optimise language-driven tasks, helping to improve accuracy, scalability, and trust. Enterprises that modernise their NLP pipelines this way can gain a competitive advantage and achieve measurable business impact. With Agentic AI for NLP, organisations can redefine how language models are applied across industries, shaping the future of intelligent automation and decision-making at scale.

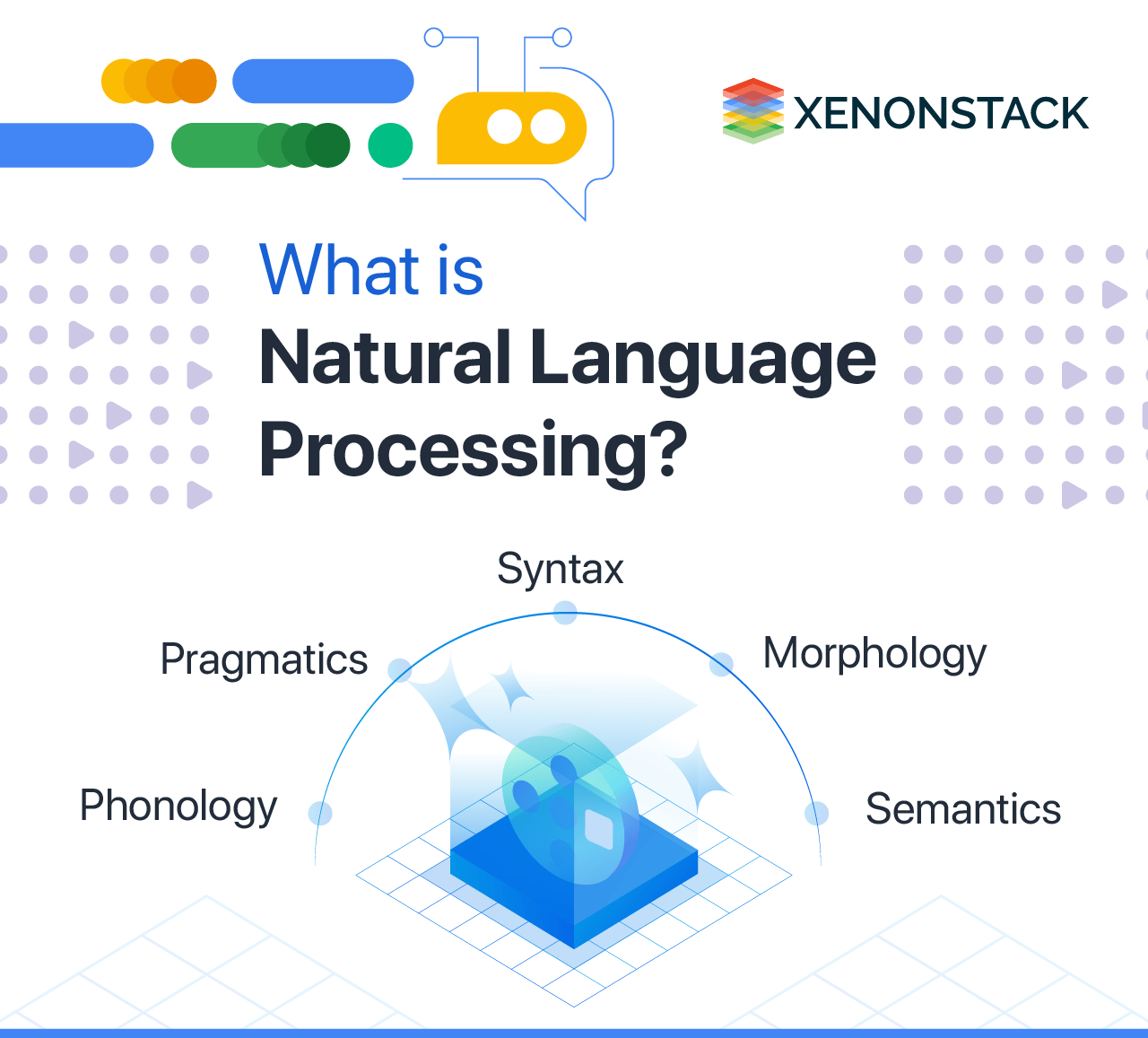

What is Natural Language Processing?

Natural Language Processing (NLP) is the discipline that enables machines to understand, interpret, and generate human language. By combining linguistics, machine learning, and deep learning models, NLP powers applications ranging from chatbots and virtual assistants to document summarisation and automated translation.

With Agentic AI, NLP capabilities evolve into adaptive and autonomous workflows where agents not only process text but also act on it to deliver intelligent outcomes.

Key Components of NLP

| Component | Role in NLP | Agentic AI Enhancement |
|---|---|---|
| Syntax | Understanding sentence structure | Agents refine grammar-aware parsing for contextual decision-making |
| Semantics | Meaning interpretation | LLM-powered agents capture nuanced meanings |
| Pragmatics | Contextual understanding | Agents adapt interpretations based on the environment |
| Morphology | Word structure | Agents enhance tokenisation and lemmatisation |
| Phonology | Sound-to-text mapping | Agents improve speech-to-text accuracy with LLMs |
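
To make the Morphology row concrete, here is a minimal sketch of tokenisation and lemmatisation in plain Python. The names `tokenise`, `lemmatise`, and `SUFFIX_RULES` are hypothetical, and the suffix rules are deliberately naive assumptions for illustration; production pipelines typically rely on dictionary- or model-based lemmatisers rather than a handful of hand-written rules.

```python
import re

# Hypothetical, deliberately tiny suffix rules (illustration only).
# Rules are tried in order; the first match wins.
SUFFIX_RULES = [
    ("ies", "y"),   # "queries" -> "query"
    ("ss", "ss"),   # protect words like "class" and "process" from the "s" rule
    ("ing", ""),    # "processing" -> "process"
    ("s", ""),      # "agents" -> "agent"
]


def tokenise(text: str) -> list[str]:
    """Split text into lowercase alphanumeric tokens, dropping punctuation."""
    return re.findall(r"[a-z0-9]+", text.lower())


def lemmatise(token: str) -> str:
    """Apply the first matching suffix rule, keeping a stem of at least 3 letters."""
    for suffix, replacement in SUFFIX_RULES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)] + replacement
    return token


tokens = tokenise("Agents are processing customer queries.")
print([lemmatise(t) for t in tokens])  # ['agent', 'are', 'process', 'customer', 'query']
```

The minimum-stem check stops short words such as "is" from being stripped to a single letter, which is one reason even simple rule-based lemmatisers need guard conditions.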

Why Agentic AI Matters for NLP

Traditional NLP models are limited to predefined tasks and lack adaptability. Agentic AI changes this by enabling autonomous language agents that collaborate, validate results, and orchestrate workflows. When these agents are combined with Large Language Models (LLMs), enterprises move beyond generative outputs to decision-centric automation.

Benefits include:

- Real-time contextual analysis
- Multilingual processing at scale
- Automated knowledge retrieval
- Enhanced compliance and governance
- Seamless integration with enterprise systems

Applications of NLP with Agentic AI and LLMs

Agentic AI enhances NLP by bridging language understanding with action. Here are the key applications:

- Healthcare: Automated patient record summarisation, clinical trial data analysis, and AI-driven diagnostic support, with synthetic patient data used for model development.
- Sentiment Analysis: Real-time monitoring of brand perception across digital channels with adaptive insights.
- Cognitive Analytics: Intelligent document processing and contextual search for faster decision-making.
- Spam Detection: Proactive filtering by self-learning agents to strengthen cybersecurity.
- Recruitment: Automated resume parsing, candidate ranking, and communication workflows.
- Conversational Frameworks: Virtual assistants, chatbots, and voice AI that adapt dynamically to user intent.

Role of Large Language Models in NLP Workflows

LLMs such as GPT, LLaMA, and Claude act as the backbone of modern NLP. Integrated with Agentic AI, they extend beyond text generation:

- Context-Aware Conversations: Agents tailor LLM outputs to enterprise-specific needs.
- Knowledge Enrichment: Integration of data from multiple sources with adaptive validation.
- Decision-Centric Automation: Language insights orchestrated into business actions.
- Multilingual Intelligence: Support for global operations with high translation accuracy.

| Capability | LLM Role | Agentic AI Orchestration |
|---|---|---|
| Text Generation | Produces human-like text | Agents validate and align outputs with context |
| Summarisation | Extracts key insights | Agents prioritise domain-specific relevance |
| Q&A Systems | Answers queries | Agents connect outputs to enterprise databases |
| Classification | Organises language data | Agents adapt categories dynamically |
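
The orchestration column can be illustrated with a validate-and-retry loop. The sketch below is a minimal, hypothetical example: `classify_with_validation`, `ALLOWED_LABELS`, and `scripted_llm` are assumptions, and no real LLM API is called. It shows one way an agent might check a model's classification against an allowed label set, feed a failed check back into the prompt, and escalate to human review rather than act on invalid output.

```python
from typing import Callable, Iterable

# Hypothetical stand-in for any LLM client: takes a prompt, returns raw text.
LLMFn = Callable[[str], str]

# Hypothetical category taxonomy for routing customer messages.
ALLOWED_LABELS = {"billing", "technical", "account", "other"}


def classify_with_validation(text: str, llm: LLMFn, max_attempts: int = 3) -> str:
    """Ask the model for a label, validate it, and retry with feedback if invalid."""
    prompt = (
        f"Classify the message into one of {sorted(ALLOWED_LABELS)}. "
        f"Reply with the label only.\nMessage: {text}"
    )
    for _ in range(max_attempts):
        label = llm(prompt).strip().lower()
        if label in ALLOWED_LABELS:
            return label  # validated output the agent can safely act on
        # Feed the validation failure back so the next attempt can self-correct.
        prompt += f"\nYour previous answer '{label}' was not a valid label. Try again."
    return "needs_review"  # escalate to a human instead of acting on bad output


def scripted_llm(replies: Iterable[str]) -> LLMFn:
    """Return a fake model that yields canned replies in order (demo only)."""
    it = iter(replies)
    return lambda prompt: next(it)


# First reply fails validation, second passes.
print(classify_with_validation("I was charged twice this month.",
                               scripted_llm(["Payments!", "billing"])))  # billing
```

Keeping the model behind a plain `Callable[[str], str]` lets the same validation logic wrap any provider's client, while capping attempts and returning `needs_review` keeps the agent from looping indefinitely or acting on unvalidated output.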

Industry Use Cases of Agentic AI for NLP

Banking and Financial Services

In banking and financial services, Agentic AI with NLP strengthens fraud detection by analysing real-time transactional text data. It also streamlines compliance reporting by automating documentation and regulatory checks, reducing manual effort and errors. Additionally, AI-powered customer query handling enhances support efficiency, enabling faster and more accurate responses across digital channels.

Retail and E-Commerce

For retail and e-commerce, Agentic AI enables personalised product recommendations by analysing browsing behaviour, purchase history, and customer intent. Automated review summarisation helps brands extract actionable insights from customer feedback, while intelligent search and discovery engines make product navigation seamless, improving user experience and boosting conversions.

Healthcare and Life Sciences

In healthcare and life sciences, Agentic AI-driven NLP simplifies clinical data summarisation, ensuring that critical patient information is accessible to practitioners quickly. Real-time patient support chatbots provide accurate, context-aware assistance, while text mining accelerates drug discovery by extracting insights from vast volumes of medical literature and research data.

Telecom and IT Services

Telecom and IT service providers leverage Agentic AI for intelligent ticket resolution, enabling faster response times and lower operational costs. Voice AI solutions enhance customer support with natural, conversational interactions, while predictive network performance monitoring through log analysis enables proactive issue detection and more reliable service delivery.

Advantages of Agentic AI for NLP

- Scalability: Agents dynamically manage growing volumes of unstructured text.
- Accuracy: LLM-powered agents can reduce errors in summarisation, translation, and classification.
- Contextual Intelligence: Better semantic understanding than static models.
- Autonomy: Agents collaborate and make routine decisions with minimal manual intervention.
- Enterprise Integration: Compatible with tools such as Akira AI and XenonStack NLP pipelines.

Challenges and Risk Considerations

While these systems are powerful, enterprises must address:

- Data Privacy: Protecting sensitive information that flows through NLP pipelines.
- Bias Mitigation: Agents must validate LLM outputs to detect and reduce discriminatory results.
- Regulatory Compliance: Aligning NLP workflows with GDPR, HIPAA, and domain-specific standards.
- Cost Optimisation: Managing compute-intensive workloads across multi-cloud setups.

Future of NLP with Agentic AI

The convergence of Agentic AI, Generative AI, and LLMs signals a future where NLP agents evolve into decision-centric collaborators. Instead of being limited to text processing, these agents will integrate deeply with enterprise ecosystems, driving automation across industries.

Conclusion: Agentic AI in NLP

Enterprises that adopt Agentic AI for Natural Language Processing gain adaptive, decision-ready workflows powered by LLMs. By combining automation, intelligence, and scalability, organisations can transform unstructured language data into actionable insights. Platforms like Akira AI and XenonStack help businesses implement secure, scalable, and trusted NLP pipelines.

Next Steps with Agentic AI for NLP

Talk to our experts about implementing Agentic AI for Natural Language Processing with LLMs to optimise workflows, enhance automation, and improve customer experiences. Unlock decision intelligence to drive measurable business impact across industries.
