What is OpenFaaS?

OpenFaaS (Functions as a Service) is a framework for building serverless functions on top of containers, using Docker and Kubernetes. With OpenFaaS, it is easy to turn almost any process into a serverless function that runs on Linux or Windows through Docker or Kubernetes. It provides built-in functionality such as self-healing infrastructure and auto-scaling, along with the ability to control every aspect of the cluster. In essence, OpenFaaS lets us decompose our applications into small units of work.

What is Serverless?

So what do we mean by serverless? When we talk about serverless, we are talking about a new architectural pattern, that is, a new way of building systems.

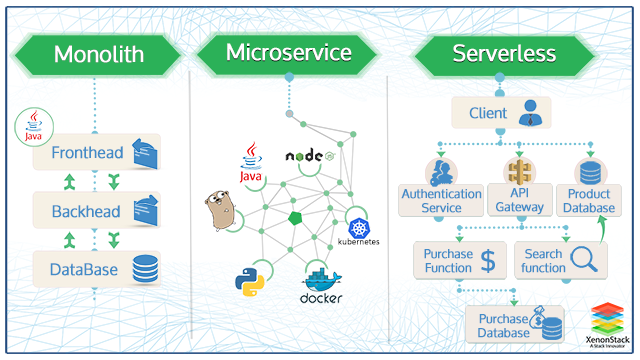

Serverless Monolith

- At the very start, we used to build monoliths: three-tier applications.

- They were very heavyweight.

- They were very slow to deploy.

- They were difficult to test.

Serverless Microservices

- After monoliths, we broke these down into microservices.

- They focused on being composable.

- We deployed them with Docker containers.

Serverless Functions

- Functions are the next step in this evolution.

- Functions are small, discrete, reusable pieces of code that we can deploy.

- They are not stateful.

- They make use of our existing services or third-party resources.

- They execute within a few seconds, a limit based on AWS Lambda’s default.

The microservices architecture is an approach in which each service runs in its own process, enabling continuous delivery and deployment of applications. Source: Microservices Architecture and Design

Serverless Vs Microservices

We have already discussed microservices and functions above. Looking more closely, serverless makes it much easier to deploy our applications without having to worry about coordinating the many pieces our cloud provider offers. A serverless platform runs functions instead of servers, and the cloud provider manages how they run. Microservices, on the other hand, are a development philosophy in which applications are built from self-contained components; they are a way to design applications and split them up. A microservices-focused architecture can be deployed on a serverless platform, a VM-based platform, or a container-based platform. Serverless is even more granular than microservices and also provides a much higher degree of functionality.

Use Cases of Serverless

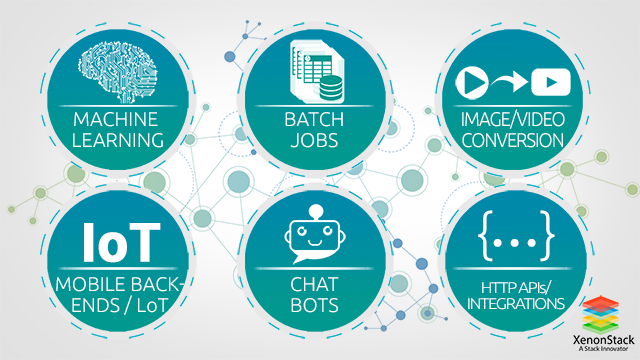

- Machine Learning (we can package it as a function and call it as we need it)

- Batch Jobs

- Image/Video Conversion

- Mobile backends/IoT

- Chat Bots

- HTTP APIs/integrations

Stack

OpenFaaS is built on a cloud-native stack. Through a Watchdog component and a Docker container, any process can become a serverless function. Serverless doesn’t mean that we have to rewrite our code in another programming language: we can run code in any language we want, wherever we want.

API Gateway

The API Gateway is where we define all of our functions. Each function gets a public route through which it can be accessed. The API Gateway also scales functions according to demand by changing the service replica count through the Docker Swarm or Kubernetes API.

Function Watchdog
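To make the STDIN/STDOUT contract of the Function Watchdog concrete, here is a minimal sketch of a process that a watchdog could run as a function. The class name EchoFunction and the reply text are assumptions made for illustration; the only point is that the request body arrives on standard input and the reply is whatever gets written to standard output.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.PrintStream;
import java.nio.charset.StandardCharsets;

// Hypothetical example of a function process in the STDIN/STDOUT style.
// The class name and the reply text are illustrative assumptions.
class EchoFunction {

    // Reads the whole request body from `in` and writes the reply to `out`.
    static void handle(InputStream in, PrintStream out) throws IOException {
        String body = new String(in.readAllBytes(), StandardCharsets.UTF_8);
        out.print("You said: " + body.trim());
        out.flush();
    }

    public static void main(String[] args) throws IOException {
        // A watchdog would pipe the HTTP request body into System.in and
        // return whatever is printed to System.out to the caller.
        handle(System.in, System.out);
    }
}
```

Keeping the logic in a method that takes the streams as parameters makes it possible to try the function locally, without any watchdog or container.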

The Function Watchdog is embedded in every container and allows any container to become serverless. It acts as an entry point: it forwards each HTTP request to the target process via standard input (STDIN), and the response that our application writes to standard output (STDOUT) is sent back to the caller.

Prometheus
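As a rough illustration of how traffic statistics can translate into a replica count, the sketch below applies a hypothetical step-based rule: each time an alert reports high traffic, a fixed percentage of the maximum replica count is added, capped at the maximum. The rule, the class name ReplicaScaler, and the numbers are assumptions for illustration only, not the OpenFaaS implementation.

```java
// Simplified sketch of step-based scaling. The rule and all parameter names
// are illustrative assumptions, not code taken from OpenFaaS.
class ReplicaScaler {

    // Returns the replica count after one scale-up step.
    // stepPercent is the share of maxReplicas added per step (20 means 20%).
    static int scaleUp(int current, int maxReplicas, int stepPercent) {
        // Integer ceiling of maxReplicas * stepPercent / 100, but at least 1.
        int step = Math.max(1, (maxReplicas * stepPercent + 99) / 100);
        return Math.min(maxReplicas, current + step);
    }

    public static void main(String[] args) {
        int replicas = 1;
        // Simulate six consecutive high-traffic alerts.
        for (int alert = 1; alert <= 6; alert++) {
            replicas = scaleUp(replicas, 20, 20);
            System.out.println("after alert " + alert + ": " + replicas + " replicas");
        }
    }
}
```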

Prometheus underpins the whole stack and collects statistics. From those statistics we can build dashboards, and when a specific function receives a lot of traffic, it is scaled up automatically using Docker Swarm.

What are the features of faas-cli?

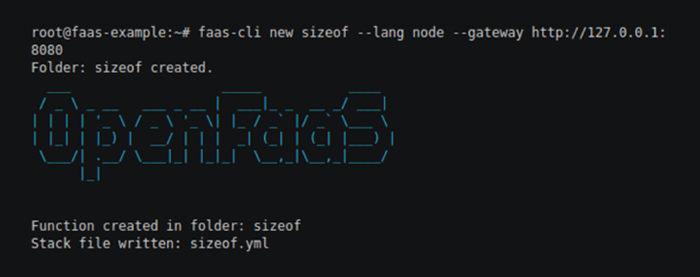

The CLI can be used to build and deploy functions to OpenFaaS. It makes deploying functions easier and more scriptable. We build OpenFaaS functions from a set of language templates such as CSharp, Node.js, Python, Ruby, Go (Golang), and many more. We only have to write a handler file, and the CLI automatically creates a Docker image. The CLI is a RESTful client for the API Gateway.

The main commands supported by the CLI:

- faas-cli new: creates a new function in the current directory.

- faas-cli build: builds Docker images.

- faas-cli push: pushes Docker images to a registry.

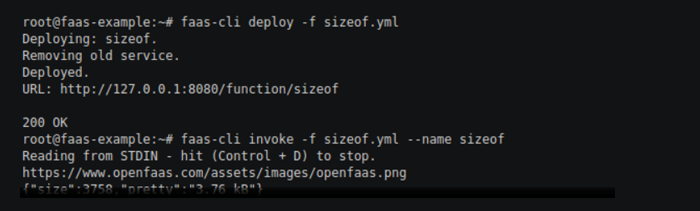

- faas-cli deploy: deploys the functions to a local or remote OpenFaaS gateway.

- faas-cli remove: removes the functions from a local or remote OpenFaaS gateway.

- faas-cli invoke: invokes a function, reading the body of the request from standard input.

- faas-cli login: stores basic auth credentials for an OpenFaaS gateway.

- faas-cli logout: removes basic auth credentials for a given gateway.

- faas-cli store: allows browsing and deploying OpenFaaS store functions.

- Use the CLI to build a template:

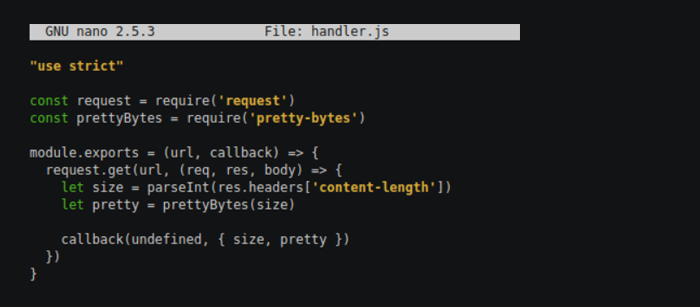

- Write the function code:

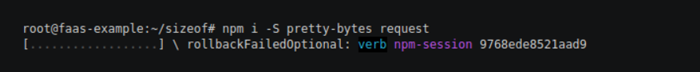

- Now install the NPM dependencies:

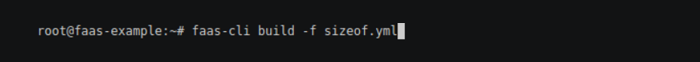

- Now build it:

- Deploy:

Kubernetes Support

OpenFaaS also supports Kubernetes. It is Kubernetes-native and uses Deployments and Services. We can deploy OpenFaaS to a Kubernetes cluster quickly and easily.

Asynchronous Processing

OpenFaaS also supports asynchronous processing. Asynchronous processing supports longer timeouts and can process work whenever resources are available instead of handling it immediately. It can consume a large batch of work within a few seconds and process it.

Async Working
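A hedged sketch of what an asynchronous invocation can look like from Java: the request goes to the gateway's /async-function/ route, and an X-Callback-Url header tells the gateway where to send the result once the work is done. The gateway address, the function name echo, and the callback URL are placeholders chosen for this example, not values from this article.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Sketch of an asynchronous invocation. The gateway address, function name,
// and callback URL used in main() are placeholders, not values from this article.
class AsyncInvoke {

    static HttpRequest buildRequest(String gateway, String function,
                                    String callbackUrl, String body) {
        return HttpRequest.newBuilder()
                // Asynchronous route on the gateway (instead of /function/<name>).
                .uri(URI.create(gateway + "/async-function/" + function))
                // The result is sent to this URL once the work has finished.
                .header("X-Callback-Url", callbackUrl)
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }

    public static void main(String[] args) throws Exception {
        HttpRequest request = buildRequest("http://127.0.0.1:8080", "echo",
                "http://example.com/callback", "hello");
        HttpResponse<Void> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.discarding());
        // The gateway is expected to accept the work immediately (202 Accepted).
        System.out.println("Gateway status: " + response.statusCode());
    }
}
```

Running main requires a reachable gateway; buildRequest on its own can be inspected without any network access.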

Below is the conceptual diagram:

With a callback URL, we can also use asynchronous calls.

OpenFaaS with Java

Deploying OpenFaaS and setting it up with Watchdog

- Clone FaaS: git clone https://github.com/openfaas/faas

- Initialize Swarm mode on your Docker daemon: sudo docker swarm init

- Now deploy the FaaS stack: ./deploy_stack.sh

- Install the CLI: curl -sSL | sudo sh

- Clone Watchdog: git clone

- Now build it: ./build.sh

- Now copy ‘of-watchdog’ to where the main JAR file exists.


What is Java Code?

The Java code will read the data from the file.

Main.java

    import java.io.*;
    import com.openfaas.*;

    public class Main {
        public static void main(String[] args) throws Exception {
            // The watchdog pipes each request into this process via standard input.
            DataInputStream dataStream = new DataInputStream(System.in);
            // Responses go back to the watchdog via standard output.
            BufferedWriter out = new BufferedWriter(new OutputStreamWriter(System.out));
            HeaderReader headerReader = new HeaderReader(dataStream);
            Parser parser = new Parser();

            // Stay alive and handle requests one after another.
            while (true) {
                parser.acceptIncoming(dataStream, out, headerReader);
            }
        }
    }

Parser.java

    import java.io.*;

    public class Parser {
        // Reads one request, passes its body to the handler, and writes the response.
        public void acceptIncoming(DataInputStream dataStream, BufferedWriter out,
                HeaderReader headerReader) throws IOException {
            StringBuffer rawHeader = headerReader.readHeader();
            // Diagnostics go to stderr because stdout carries the response.
            System.err.println(rawHeader);

            HttpHeader header = new HttpHeader(rawHeader.toString());
            if (header.getMethod() != null) {
                System.err.println(header.getMethod() + " method");
                System.err.println(header.getContentLength() + " bytes");

                byte[] body = header.readBody(dataStream);

                // Invoke the user-supplied function with the request body.
                function.Handler handler = new function.Handler();
                String functionResponse = handler.function(new String(body), header.getMethod());

                HttpResponse response = new HttpResponse();
                response.setStatus(200);
                response.setContentType("text/plain");

                StringBuffer outBuffer = response.serialize(functionResponse);
                out.write(outBuffer.toString(), 0, outBuffer.length());
                out.flush();
            }
        }
    }

Handler.java

    package function;

    public class Handler {
        // Returns the response body for a given request body and HTTP method.
        public String function(String input, String method) {
            return "Hi from your Java function. You said: " + input;
        }
    }

- HeaderReader.java

- HttpHeader.java

- HttpResponse.java
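The source of these three helper classes is not shown in this article. As a hedged sketch of what one of them might look like, the following HttpResponse offers the three methods that Parser.java calls (setStatus, setContentType, serialize) and writes a minimal HTTP/1.1 response. Everything beyond those method names, including the header set and the reason phrases, is an assumption and may differ from the original class.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical sketch of HttpResponse. The article does not show this class,
// so everything beyond the three method names used by Parser is an assumption.
class HttpResponse {
    private int status = 200;
    private String contentType = "text/plain";

    public void setStatus(int status) { this.status = status; }

    public void setContentType(String contentType) { this.contentType = contentType; }

    // Builds a minimal response: status line, headers, blank line, body.
    public StringBuffer serialize(String body) {
        // Content-Length counts bytes, not characters.
        int length = body.getBytes(StandardCharsets.UTF_8).length;
        StringBuffer out = new StringBuffer();
        out.append("HTTP/1.1 ").append(status).append(' ')
           .append(reason(status)).append("\r\n");
        out.append("Content-Type: ").append(contentType).append("\r\n");
        out.append("Content-Length: ").append(length).append("\r\n");
        out.append("\r\n");
        out.append(body);
        return out;
    }

    // Reason phrases for a few common status codes.
    private static String reason(int status) {
        switch (status) {
            case 200: return "OK";
            case 404: return "Not Found";
            case 500: return "Internal Server Error";
            default:  return "Unknown";
        }
    }

    public static void main(String[] args) {
        HttpResponse response = new HttpResponse();
        response.setStatus(200);
        response.setContentType("text/plain");
        System.out.print(response.serialize("Hi from your Java function."));
    }
}
```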

Execution of the Java Program

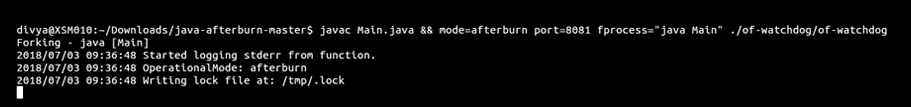

Now execute the Java program using the command below:

- javac Main.java && mode=afterburn port=8081 fprocess="java Main" ./of-watchdog/of-watchdog

Now open a new terminal window, go to your Java code directory, and execute the command below:

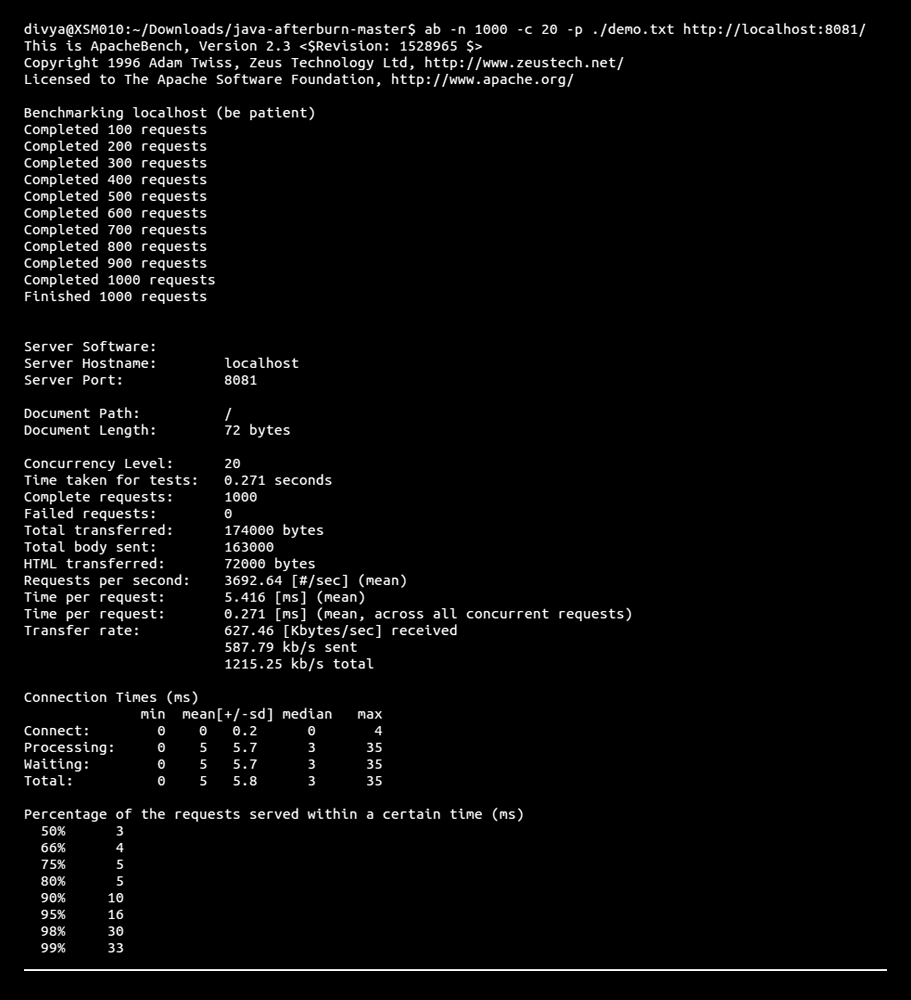

- ab -n 1000 -c 20 -p ./demo.txt http://localhost:8081/ (this command benchmarks the function by sending 1000 POST requests, 20 at a time, with demo.txt as the request body)

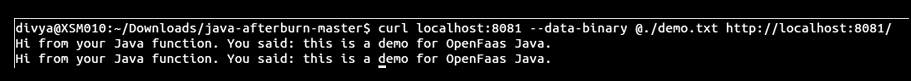

Now execute your function using curl:

- Here we pass the .txt file from which our Java function reads its data:

- curl --data-binary @./demo.txt http://localhost:8081/

Conclusion

OpenFaaS is based on the serverless model. The main benefits of serverless are that we do not have to manage the application infrastructure and that developers can concentrate on delivering business value. Serverless is a good fit for IoT devices, microservice architectures, or any other type of application that needs to be efficient.
