Artificial Intelligence Overview

Artificial intelligence (AI) is the ability of machines to perform tasks that usually require human intelligence, and to perform them quickly. AI has two key components:

- Automation

- Intelligence

The Evolution of Artificial Intelligence

1. Machine Learning

It is a set of algorithms used by intelligent systems to learn from experience.

2. Machine Intelligence

These are more advanced sets of algorithms used by machines to learn from experience, for example deep neural networks. Artificial intelligence technology is currently at this stage.

3. Machine Consciousness

At this stage, a machine would learn from its own experience without the need for external data.
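As a minimal sketch of what "learning from experience" means at the machine learning stage, the Python snippet below fits a straight line to a handful of example points by gradient descent. The function name `fit_line`, the learning rate, and the sample data are illustrative assumptions and do not come from any particular AI product.

```python
def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Fit y ~ w*x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Move the parameters a small step against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical "experience": points that lie on the line y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)
print(f"learned slope {w:.3f}, intercept {b:.3f}")
```

The model starts with no knowledge (both parameters at zero) and improves only by repeatedly comparing its predictions with the examples, which is the core idea behind the more advanced algorithms mentioned above.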

3 Types of Artificial Intelligence

1. Artificial Narrow Intelligence (ANI)

It covers basic, narrowly defined tasks such as those performed by chatbots and personal assistants like Siri by Apple and Alexa by Amazon.

2. Artificial General Intelligence (AGI)

Artificial general intelligence refers to machines that could perform human-level tasks across many domains and keep learning continually. It remains a research goal: systems such as Uber's self-driving cars and Tesla's Autopilot are sometimes cited as steps toward it, but they are still forms of narrow AI.

3. Artificial Super Intelligence (ASI)

Artificial superintelligence refers to a hypothetical intelligence that would far surpass that of humans.

Top 8 AI Applications to Look Out for in 2023

1. Natural Language Processing (NLP)

AI powers language-based applications like chatbots, language translation, sentiment analysis, and text summarization.

2. Autonomous Vehicles

AI is pivotal in developing self-driving cars and autonomous vehicles by enabling object recognition, decision-making, and navigation.

3. E-commerce and Recommendation Systems

AI enhances the user experience in online shopping by personalizing product recommendations and improving search results.
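One common way to produce personalized recommendations is to compare a shopper's ratings with those of other shoppers. The sketch below is a toy user-based approach using cosine similarity; the item names, ratings, and the helper names `cosine` and `recommend` are all hypothetical, and production recommender systems are considerably more elaborate.

```python
import math

# Hypothetical catalogue and ratings (0 means "not rated yet").
ITEMS = ["laptop", "mouse", "keyboard", "monitor"]
RATINGS = {
    "ana": [5, 4, 0, 0],
    "ben": [5, 5, 4, 0],
    "cara": [0, 0, 1, 5],
}


def cosine(u, v):
    """Cosine similarity between two rating vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def recommend(user, ratings, items, top_n=1):
    """Suggest unrated items, weighted by how similar other users are."""
    target = ratings[user]
    scores = [0.0] * len(items)
    for other, vector in ratings.items():
        if other == user:
            continue
        similarity = cosine(target, vector)
        for i, rating in enumerate(vector):
            if target[i] == 0:  # only score items the user has not rated
                scores[i] += similarity * rating
    candidates = [i for i in range(len(items)) if target[i] == 0 and scores[i] > 0]
    candidates.sort(key=lambda i: scores[i], reverse=True)
    return [items[i] for i in candidates[:top_n]]


print(recommend("ana", RATINGS, ITEMS))
```

Here "ana" has tastes close to "ben", so the item "ben" rated highly that "ana" has not seen yet is the one suggested.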

4. Robotics and Automation

AI-driven robotics and automation streamline manufacturing processes, logistics, inventory management, and repetitive tasks in various industries.

5. Image and Speech Recognition

AI applications include facial recognition, object detection, speech-to-text, and text-to-speech technologies.

6. Predictive Analytics

AI-driven predictive models help in forecasting trends, customer behaviour, market insights, and business strategies across industries.
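To make the idea of forecasting concrete, the sketch below uses simple exponential smoothing, a classical baseline rather than a full AI model. The function name `exponential_smoothing_forecast`, the smoothing factor, and the sales figures are assumptions chosen for illustration.

```python
def exponential_smoothing_forecast(series, alpha=0.5):
    """Return a one-step-ahead forecast using simple exponential smoothing.

    alpha close to 1 trusts recent observations more;
    alpha close to 0 trusts the long-run level more.
    """
    level = series[0]
    for value in series[1:]:
        # Blend the newest observation with the running level.
        level = alpha * value + (1 - alpha) * level
    return level


# Hypothetical weekly sales figures.
weekly_sales = [10, 12, 14]
print(exponential_smoothing_forecast(weekly_sales))
```

More capable predictive models follow the same pattern of learning from past observations, but they can also take additional signals into account, such as seasonality or customer attributes.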

7. Cybersecurity

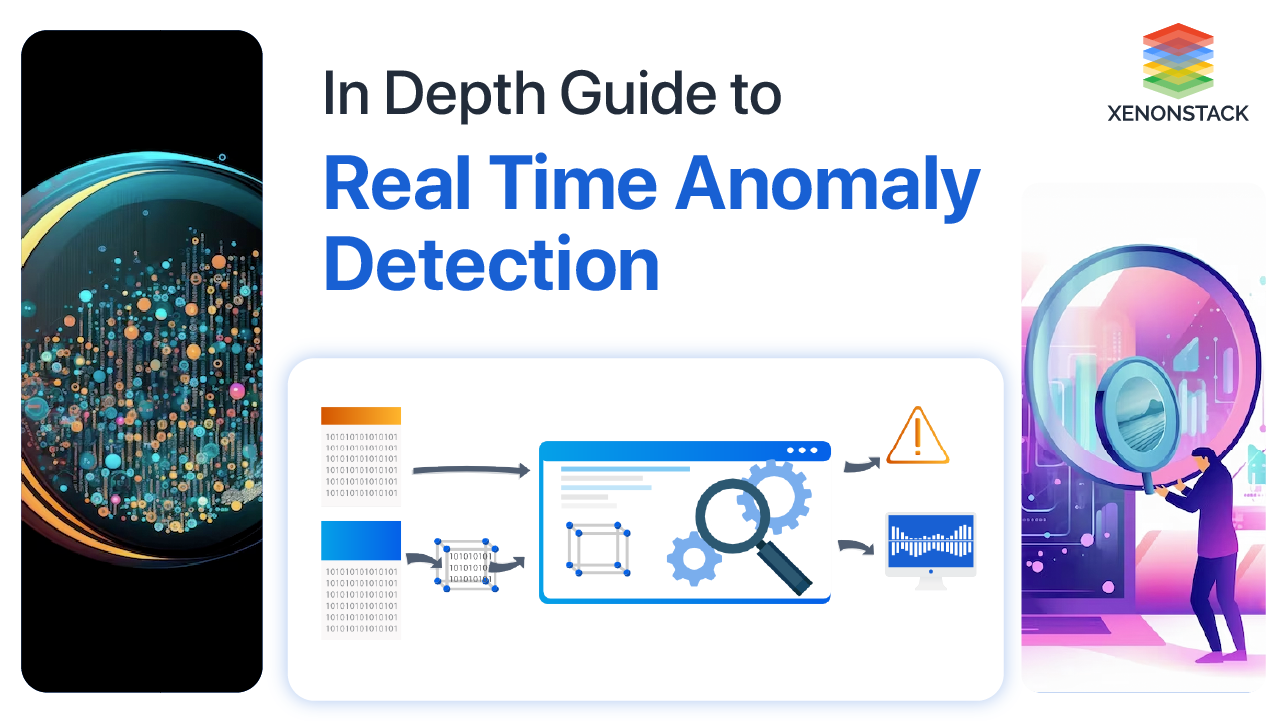

AI enhances security measures by detecting anomalies, identifying threats, and preventing cyberattacks in real-time.
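Anomaly detection can be illustrated with a very simple statistical rule: flag any value that lies unusually far from the average. The sketch below assumes a hypothetical series of login attempts per minute; the function name `find_anomalies` and the threshold of 2 standard deviations are illustrative choices, and real security tools use far richer models.

```python
import statistics


def find_anomalies(values, threshold=2.0):
    """Return the indexes of values whose z-score exceeds the threshold."""
    mean = statistics.mean(values)
    stdev = statistics.pstdev(values)  # population standard deviation
    if stdev == 0:
        return []  # all values identical, nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mean) / stdev > threshold]


# Hypothetical login attempts per minute; the last minute shows a spike.
logins_per_minute = [12, 15, 11, 14, 13, 12, 16, 14, 13, 250]
print(find_anomalies(logins_per_minute))
```

Only the spike at the end of the series is flagged, which is the kind of signal a monitoring system could then escalate for review.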

8. Smart Assistants and IoT

AI powers smart home devices, virtual assistants like Siri and Alexa, and interconnected IoT systems for seamless automation and control.

Various Sectors Harnessing the Power of Artificial Intelligence

Artificial intelligence (AI) spans numerous industries with diverse applications. Some prevalent uses of AI include:

1. Healthcare

Utilizing AI for disease diagnosis, cancer cell identification, chronic condition analysis, drug discovery, and personalized medical treatments.

2. E-commerce

Employing AI for tailored shopping experiences, recommendation systems, marketing automation, and customer service using chatbots.

3. Robotics

Implementing AI for real-time obstacle detection, path planning, transportation of goods, cleaning tasks, and inventory management in settings such as healthcare, manufacturing, and warehouses.

4. Finance

Applying AI for credit scoring, fraud detection, risk assessment, and algorithmic trading.

5. Manufacturing

Using AI for quality assurance, predictive maintenance, supply chain optimization, and greater productivity and precision in processes involving robotics.

6. Entertainment

Enhancing interaction with art and music through generative art, interactive installations, and virtual concerts.

7. Human Resources

Employing AI for resume screening, ranking candidates, and automating repetitive communication tasks via chatbots.

8. Agriculture

Utilizing AI to identify soil defects and nutrient deficiencies, detect weeds, and harvest crops.

9. Gaming

Applying AI to create intelligent game characters, optimize game strategies, and enrich user experience.

10. Law and Legal Services

Utilizing AI for legal research, contract analysis, and predicting case outcomes.

11. Transportation

Employing AI for autonomous vehicles, route optimization, and predictive maintenance within the transportation industry.

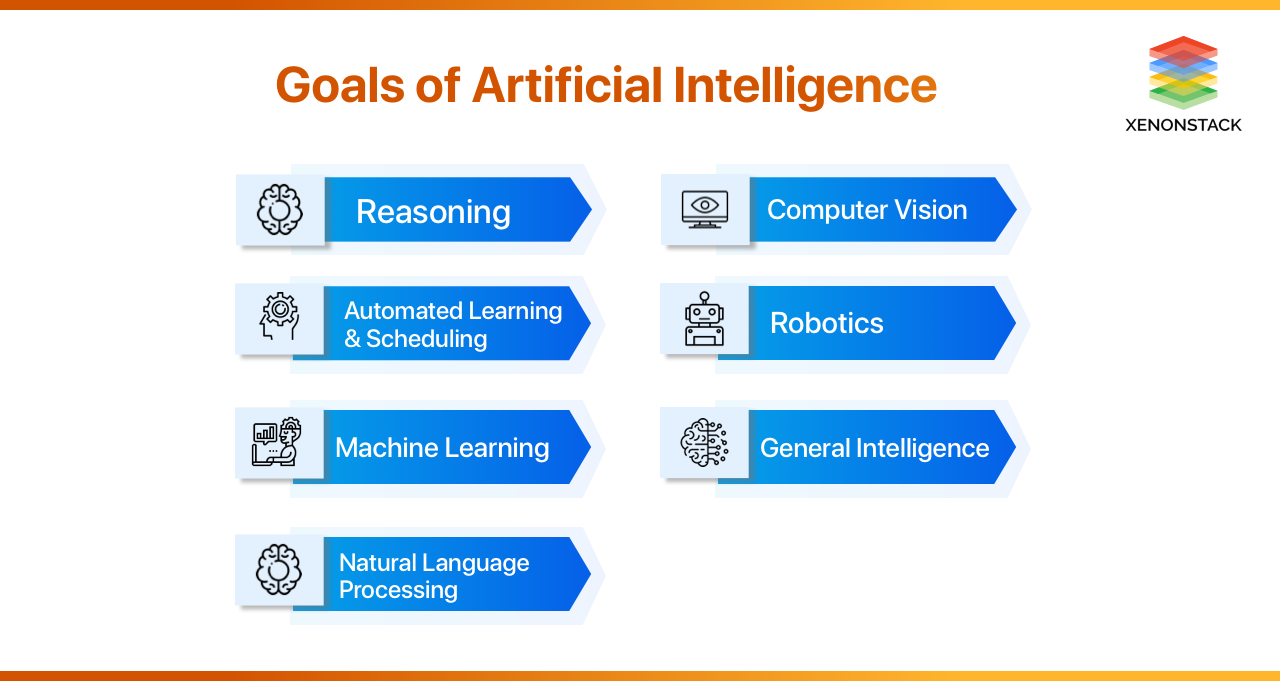

Objectives of Artificial Intelligence

1. Automate Workloads

Artificial intelligence collects and analyzes data using smart sensors or machine learning algorithms and automatically routes service requests to reduce the human workload. It simplifies IT operations as well.

2. Data Management

AI applications help manage and analyze large databases with ease. They also present a meaningful view of assets, businesses, staff, or clients.

3. Improve Customer Service

With the use of a virtual assistant, businesses can provide real-time support and interactions to their clients.

4. Increase Revenue

Applications that use artificial intelligence in DevOps help businesses identify upcoming risks and maximize sales opportunities.

A Comprehensive Approach to Artificial Intelligence

A holistic strategy for AI demands active cooperation and discussion among various stakeholders, such as policymakers, industry pioneers, researchers, ethicists, and the wider public. This collaboration is essential to ensuring the responsible development and ethical use of AI technologies for the betterment of society.
