What is the Envoy Proxy?

Envoy Proxy is a modern, high-performance edge and service proxy that works at both L4 and L7. It is best suited to cloud-native applications that need a proxy layer at L7. Envoy is most comparable to software load balancers such as NGINX and HAProxy, but it has several advantages over typical proxies: it has first-class SSL/TLS support, and it is very flexible in how it discovers services and load-balances workloads.

Why Does Envoy Proxy Matter?

There are already many traditional proxies, so why do we need a modern edge proxy such as Envoy? NGINX, HAProxy, and Envoy are all battle-tested L4 and L7 proxies. Envoy, however, was developed with modern microservices in mind and offers the following additional benefits:

- It translates between HTTP/2 and HTTP/1.1.

- It proxies any TCP protocol.

- It can proxy raw data, WebSockets, database traffic, and more.

- It has first-class SSL/TLS support.

- It has built-in service discovery as well as load balancing.

- It handles configuration dynamically. Hosts are added at runtime through its discovery (xDS) APIs rather than by writing a list to a static config file and re-reading it.

- Envoy stores the mapping from client requests (i.e., URLs) to services, and its built-in load balancer reads that mapping dynamically.
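The dynamic URL-to-service mapping and built-in load balancing described above can be illustrated with a minimal Python sketch. The class `DynamicLoadBalancer` and its methods are hypothetical and are not Envoy's API. Exact-path matching and round-robin selection are simplifying assumptions. The point is that routes and host sets change at runtime, and the next pick sees the change without any config file being re-read.

```python
from __future__ import annotations


class DynamicLoadBalancer:
    """Hypothetical sketch: routes and host sets that can change at runtime."""

    def __init__(self) -> None:
        self.routes: dict[str, str] = {}          # URL path -> cluster name (exact match only)
        self.clusters: dict[str, list[str]] = {}  # cluster name -> host addresses
        self._positions: dict[str, int] = {}      # per-cluster round-robin counter

    def update_route(self, path: str, cluster: str) -> None:
        # Stands in for a route update pushed at runtime (no static file re-read).
        self.routes[path] = cluster

    def update_hosts(self, cluster: str, hosts: list[str]) -> None:
        # Stands in for service discovery replacing a cluster's host set at runtime.
        self.clusters[cluster] = list(hosts)

    def pick_host(self, path: str) -> str:
        # Look up the cluster for this URL, then round-robin over its current hosts.
        cluster = self.routes[path]
        hosts = self.clusters.get(cluster, [])
        if not hosts:
            raise LookupError(f"cluster {cluster!r} has no hosts")
        position = self._positions.get(cluster, 0)
        self._positions[cluster] = position + 1
        return hosts[position % len(hosts)]


if __name__ == "__main__":
    lb = DynamicLoadBalancer()
    lb.update_route("/orders", "orders")
    lb.update_hosts("orders", ["10.0.0.1:8080", "10.0.0.2:8080"])
    print([lb.pick_host("/orders") for _ in range(3)])
    # -> ['10.0.0.1:8080', '10.0.0.2:8080', '10.0.0.1:8080']
    # A new instance appears; the next picks include it without any restart.
    lb.update_hosts("orders", ["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
    print([lb.pick_host("/orders") for _ in range(3)])
    # -> ['10.0.0.1:8080', '10.0.0.2:8080', '10.0.0.3:8080']
```

Real Envoy supports several load-balancing policies and receives updates from a control plane. The sketch only shows why dynamic updates avoid the read-a-static-file cycle.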


How does Envoy Work?

Configuring Envoy is a somewhat complex task, but with the right approach it can be done. Envoy keeps simple things simple while making complex things possible to implement.

Network Stack

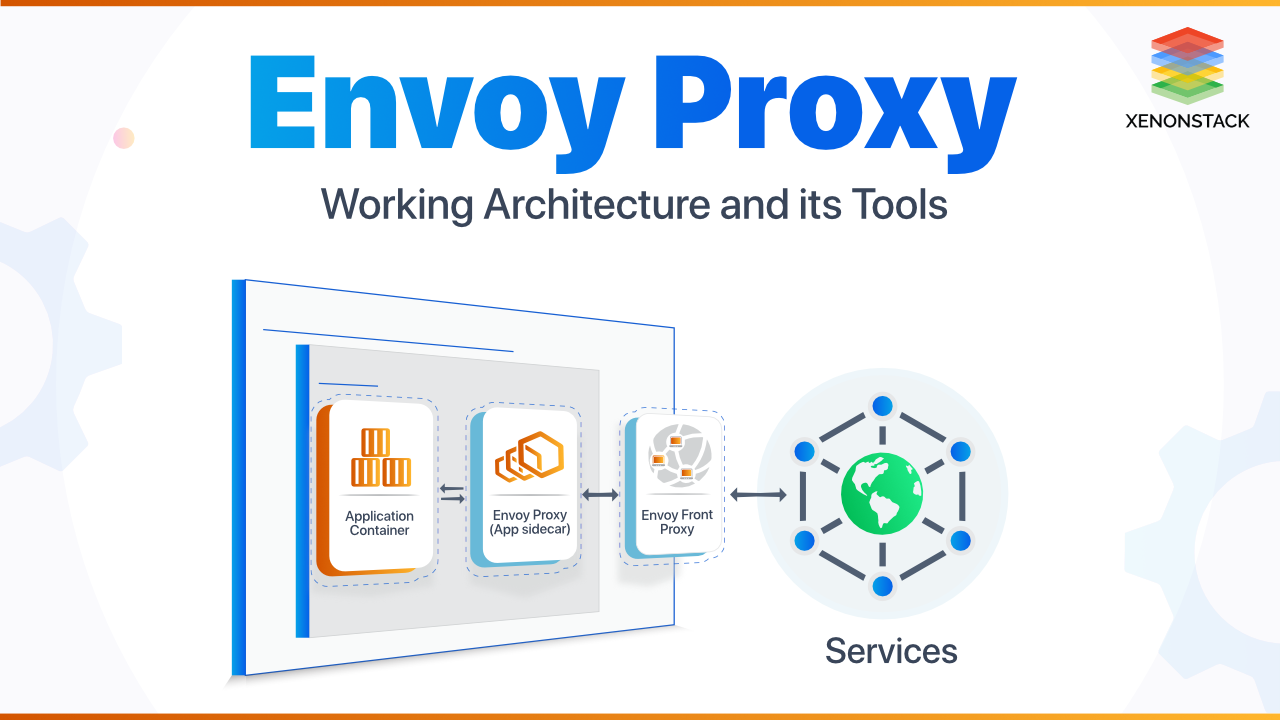

Envoy works at the TCP level as a Layer 3/4 (network/transport) proxy. It simply reads and writes bytes and uses IP addresses and port numbers to decide where to route a request. Working at the TCP level is very fast and simple. Envoy can also work at L7 at the same time when it has to proxy different URLs to different backends, because that requires application information available only at L7. Working at layers 3, 4, and 7 lets it cover the limitations of each while keeping good performance.

Two Layers of Envoy

Edge Envoy: A standalone instance that acts as the single point of ingress to the cluster. All requests from outside arrive here first, and it forwards them to the internal Envoys.

Sidecar Envoy: Each instance (replica) of an app has a sidecar Envoy that runs alongside the app. These Envoys monitor everything about the application.

All these proxies form a mesh with internal routing between them. The sidecar Envoys perform health monitoring and let the mesh know whether a service is down. All Envoys also gather stats from the application and send them to a telemetry component (Mixer, in earlier versions of Istio). Each Envoy in the mesh is configured differently, according to its particular use case, by modifying its Envoy configuration.
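Here is a rough Python sketch of the sidecar's health-monitoring and stats role described above. `SidecarMonitor`, the threshold of three consecutive 5xx responses, and the report format are all hypothetical. Real Envoy exposes this through configured health checking, outlier detection, and its built-in stats, not hand-written code. The sketch only shows the idea: count outcomes, flag the service as down after repeated failures, and produce numbers a telemetry component could collect.

```python
from __future__ import annotations

from collections import Counter


class SidecarMonitor:
    """Hypothetical sketch of what a sidecar tracks for its local app."""

    def __init__(self, failure_threshold: int = 3) -> None:
        self.failure_threshold = failure_threshold  # assumed value, not an Envoy default
        self.consecutive_failures = 0
        self.stats: Counter[str] = Counter()

    def record(self, status_code: int) -> None:
        # Update counters for every response the sidecar proxies to the app.
        self.stats["requests_total"] += 1
        if status_code >= 500:
            self.stats["errors_5xx"] += 1
            self.consecutive_failures += 1
        else:
            self.consecutive_failures = 0  # any success resets the streak

    @property
    def healthy(self) -> bool:
        # The service is reported as down after too many consecutive 5xx responses.
        return self.consecutive_failures < self.failure_threshold

    def report(self) -> dict:
        # What might be shipped to a telemetry component.
        return {"healthy": self.healthy, **self.stats}


if __name__ == "__main__":
    monitor = SidecarMonitor()
    for code in [200, 503, 500, 502]:
        monitor.record(code)
    print(monitor.report())
```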


What are the benefits of Envoy Proxy?

- Wickedly fast compared with older-generation proxies.

- Scales horizontally with ease, which modern apps need.

- Proxies can be added and removed dynamically.

- Allows filtering requests based on many parameters.

- For edge Envoys, any number of servers (each pointing to its own array of hosts) and any number of routes for different proxy URLs can be added, which gives flexibility in infrastructure management.

- For sidecars, by contrast, Envoy has only one route, which proxies to the app running on localhost.

- Configuring a sidecar proxy is straightforward, and the configuration can be updated dynamically.

- Envoy supports DNS-based discovery. Names are easier to remember than addresses, and new service instances are added to DNS dynamically.

- Envoy manages, observes and works best at L7.

- Envoy aligns well with the microservices world.

- Provides features such as resilience, observability, and load balancing.

- Envoy embraces distributed architectures and is used in production at Lyft, Apple, and Google.
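The contrast above between edge Envoys (many routes for different URLs) and sidecars (one route to localhost) can be sketched as follows. Routing by URL path is an L7 decision, because the proxy must parse the HTTP request to see the path. This is a minimal Python sketch, not Envoy's route configuration. The cluster names and the app port 8080 are hypothetical. It imitates, in simplified form, Envoy's behavior of checking routes in order and using the first match.

```python
from __future__ import annotations


def match_route(path: str, routes: list[tuple[str, str]]) -> str:
    """Return the upstream of the first route whose prefix matches `path`.

    Routes are checked in order (first match wins), so more specific
    prefixes must be listed before general ones such as "/".
    """
    for prefix, upstream in routes:
        if path.startswith(prefix):
            return upstream
    raise LookupError(f"no route for {path!r}")


# Edge Envoy: many routes, each pointing at its own (hypothetical) cluster of hosts.
EDGE_ROUTES = [
    ("/api/users", "users-cluster"),
    ("/api/orders", "orders-cluster"),
    ("/", "frontend-cluster"),
]

# Sidecar Envoy: a single catch-all route to the app on localhost (assumed port 8080).
SIDECAR_ROUTES = [("/", "127.0.0.1:8080")]


if __name__ == "__main__":
    for path in ["/api/users/42", "/api/orders", "/about"]:
        print(path, "->", match_route(path, EDGE_ROUTES))
    print("/anything ->", match_route("/anything", SIDECAR_ROUTES))
```

Because the first match wins, putting `("/", ...)` at the top of `EDGE_ROUTES` would send every request to the frontend. Ordering matters as much as the prefixes themselves.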

How to adopt Envoy Proxy?

Develop applications using a microservices architecture, not a monolith. For transparent communication, it is better not to add networking functions inside the code; use Envoy for that instead. First, deploy Envoy as a sidecar for a couple of microservices and test everything between them. Then deploy it cluster-wide for all microservices. Envoy can be used along with a service mesh such as Istio, which makes managing all microservices a breeze.

What are the best practices of Envoy Proxy?

Best practices when implementing Envoy proxy for microservices: to take advantage of all the features Envoy provides, set up the whole service mesh, including both edge and sidecar proxies.

- Configure advanced patterns such as circuit breaking and automatic retries.

- Test deployments using incremental blue/green deploys, separating deployment from the actual production release. First, deploy new versions side by side without sending them traffic. Then shift 1% of traffic to the new version and check the metrics in a Grafana dashboard. If everything looks good, increase to 10%, 50%, and finally 100%.

- Create alerts based on Prometheus or Grafana metrics.

- Try to create a self-healing infrastructure.

- Run all microservices on the same port to maintain consistency.

- Configure Envoy's listener to send requests to that port on each microservice's localhost.
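The incremental rollout described in the list above (1%, 10%, 50%, then 100%) can be sketched in Python. The function names, the request ID used for bucketing, and the 1% error-rate threshold for rolling back are all hypothetical. In Envoy itself, this kind of split is expressed with weighted clusters in the route configuration, not with code like this. The sketch shows two things. Hashing requests into stable buckets keeps a request on the new version as its share grows. Advancing or rolling back depends on the metrics you observe.

```python
import zlib

# Rollout steps from the text: percent of traffic sent to the new version.
ROLLOUT_STEPS = [1, 10, 50, 100]


def choose_version(request_id: str, new_version_percent: int) -> str:
    """Send a stable share of requests to the new version ("v2").

    Hashing the (assumed) request ID puts each request in a fixed bucket 0..99,
    so a request that goes to "v2" at 10% still goes there at 50%.
    """
    bucket = zlib.crc32(request_id.encode("utf-8")) % 100
    return "v2" if bucket < new_version_percent else "v1"


def next_percent(current: int, error_rate: float, max_error_rate: float = 0.01) -> int:
    """Advance to the next rollout step, or roll back to 0% if metrics look bad.

    `error_rate` stands in for what the dashboard shows; the threshold is an assumption.
    """
    if error_rate > max_error_rate:
        return 0  # roll back: all traffic returns to the old version
    for step in ROLLOUT_STEPS:
        if step > current:
            return step
    return current  # already at 100%


if __name__ == "__main__":
    percent = 0
    for observed_error_rate in [0.0, 0.002, 0.004, 0.001]:
        percent = next_percent(percent, observed_error_rate)
        sample = [choose_version(f"req-{i}", percent) for i in range(1000)]
        print(percent, sample.count("v2"))
```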

What are the best Envoy Proxy tools?

The best Envoy Proxy tools are listed below:

- Ambassador API Gateway - Built atop Envoy to connect external clients to various services; used as a front proxy.

- For deployment - containers and orchestration tools such as Docker and Kubernetes.

- For a service mesh around all microservices - Istio, which uses a modified version of Envoy.

- Service discovery - Envoy's built-in discovery.

Conclusion

Developed at Lyft, Envoy now has a vibrant open-source contributor community and is an official CNCF graduated project, along with Kubernetes and Prometheus. Envoy provides more visibility into the system. Because it is deployed as a sidecar alongside each service, there is no need to change application logic. Envoy allows microservices to talk to each other in a transparent, elegant, and resilient way. It also adds the observability that modern cloud-native web applications need. Envoy is supported by Google, IBM, and many other big players.
