How does Angular compile, and how does compilation affect performance?

An Angular application can be compiled with either of the following two commands:

- ng serve

- ng build

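In the Angular CLI versions this article describes, both ng serve and ng build produce a development build by default and bundle the application's files into a small set of JavaScript bundles. (Since Angular 12, ng build uses the production configuration by default.)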

The similarities between the two commands are:

- Both commands compile the application and produce a development build by default. At this stage, the compiled files are not optimized.

- Both commands bundle the application. Bundling is the process of combining many source files into a few output files. By default, the build produces five JavaScript bundles plus their source map files, and these bundles are referenced by script tags in the index.html file that the browser loads.


The generated files are:

- inline.bundle.js: contains the webpack runtime, the small loader script that bootstraps the other bundles.

- polyfills.bundle.js: contains the polyfills that make the application work in the browsers it targets, including older browsers that lack some modern features.

- main.bundle.js: contains the application's own code.

- styles.bundle.js: contains the styles used by the application.

- vendor.bundle.js: contains Angular itself and other third-party libraries.

The differences between the two commands are:

- ng serve: compiles the application in memory and serves it from a local development server, so it is used during development. It does not write any files to the build folder, so its output cannot be deployed to another server.

- ng build: compiles the application and writes the build files to an output folder on disk. This folder can then be deployed to any external web server. The outputPath property in the build section of the angular.json file determines the name of the build folder.

What types of compilation does Angular provide?

Angular provides two compilation modes:

- AOT (Ahead-of-Time) compilation

- JIT (Just-in-Time) compilation

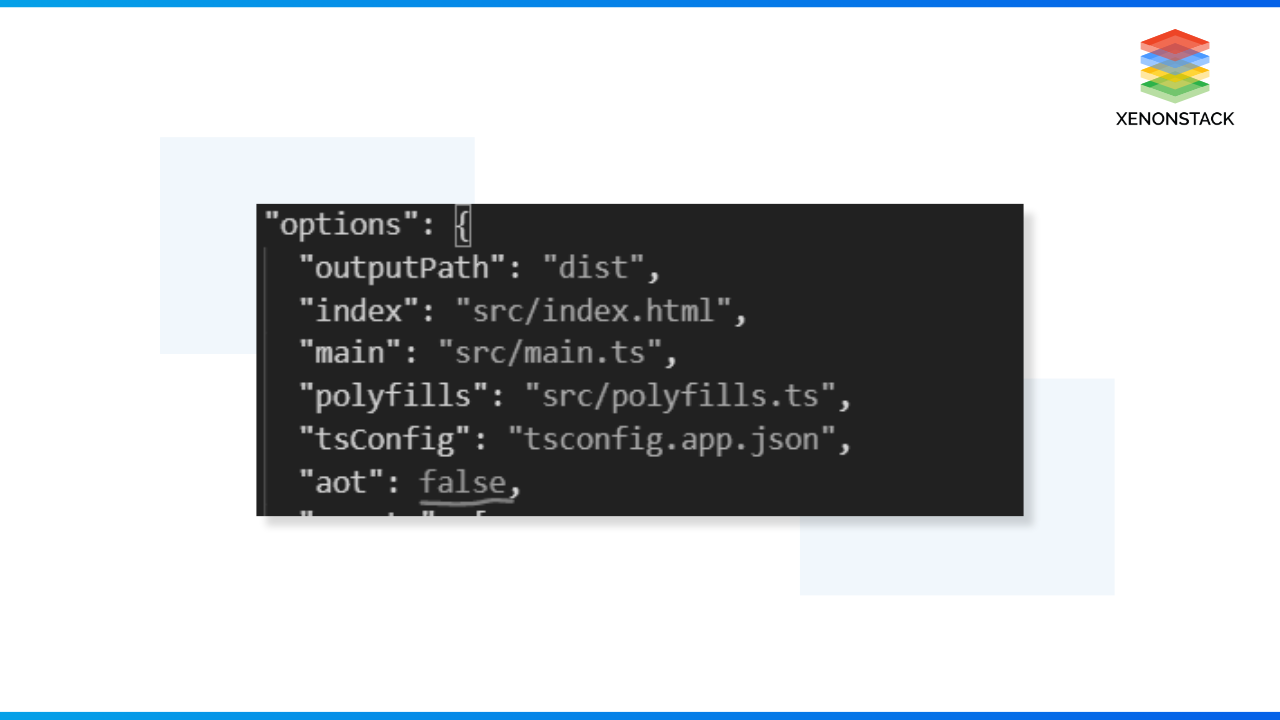

Until Angular 8, JIT was the default for development builds (production builds made with --prod already used AOT). From Angular 9, AOT is the default. Whether ng serve uses AOT depends on the aot option set in the angular.json file.

JIT

In JIT mode, the application is compiled in the browser at runtime. The browser downloads the compiler along with the project files, and the compiler accounts for about 45% of the vendor.bundle.js file loaded in the browser.

The disadvantages of JIT compilation are:

- It increases the application's size, because the compiler is shipped to the browser, which hurts the application's overall performance.

- The user has to wait for the compiler to load and compile the application before it can render, which increases load time.

- Template binding errors are not reported at build time; they only surface at runtime.

AOT Compilation

In AOT compilation, the application is compiled during the build process, and the browser downloads the precompiled bundles. Because the compiler is no longer shipped to the browser, the vendor bundle shrinks by roughly half.

The major advantages of AOT compilation are:

- Rendering is faster, because the browser downloads an already compiled version of the application.

- The download size is smaller, because the bundle does not include the compiler, which accounts for roughly half of the vendor bundle.

- Template binding errors are detected at build time, so the build fails fast and problems are easier to fix during development.


Why is Performance Optimization necessary?

Angular has become a widely used framework for building business applications. The adoption of any application depends on its performance: the faster and more reliable the application is, the higher its usage and customer satisfaction.

According to research, if an application takes more than 3 seconds to load, users tend to abandon it and switch to a competing application, which can be a big loss to the business.

During rapid development, developers sometimes overlook performance and fall into poor practices, which degrades the application's performance. Optimizing the application is therefore necessary to reduce its load time and improve its performance.

How do we solve performance optimization issues?

The performance of any application plays a vital role in the growth of the business. Although Angular is a high-performing front-end framework, we still face challenges in optimizing performance. The major symptoms of performance issues are declining traffic, decreasing engagement, a high bounce rate, and application crashes under heavy usage.

Performance can only be optimized once we know the exact cause of the degradation. Some of the problems applications face are:

- Unnecessary server usage

- Slow page response

- Unexpected errors due to real-time data streams

- Periodic slowdowns

Proper techniques can solve these problems. We should also make sure we follow best coding practices and a clean code architecture. We (at Xenonstack) have improved application performance by following these.

A few other ways to address performance issues are:

- Removing unnecessary change detection, which slows down the application

- Applying the OnPush strategy where it is needed

- Reducing computation in templates

Let's move ahead and focus on the more detailed optimization techniques.


Performance optimization techniques

Angular does a good job of providing strong performance out of the box. However, large-scale applications involve many other factors, and a few popular techniques help us optimize them further.

So, let's go through these techniques in detail. They are essential practices that can significantly improve performance.

Using AoT Compilation

As discussed above, Angular provides two types of compilation:

- JIT (Just-in-Time)

- AoT (Ahead-of-Time)

We looked at how both work in the section above. Since Angular 9, AoT has been the default compilation mode, which improves performance. JIT compiles the application at runtime and bundles the compiler with the application, which increases the bundle size and the time it takes to render components.

AoT compilation, by contrast, compiles the application at build time and ships only the compiled templates, without the compiler. As a result, the bundle size decreases and rendering becomes significantly faster. Therefore, we should always use AoT compilation for our applications.

Using OnPush Change Detection Strategy

Before exploring change detection strategies, it is essential to understand what change detection is. Change detection is the process by which Angular checks the application's state and updates the DOM to reflect any changes.

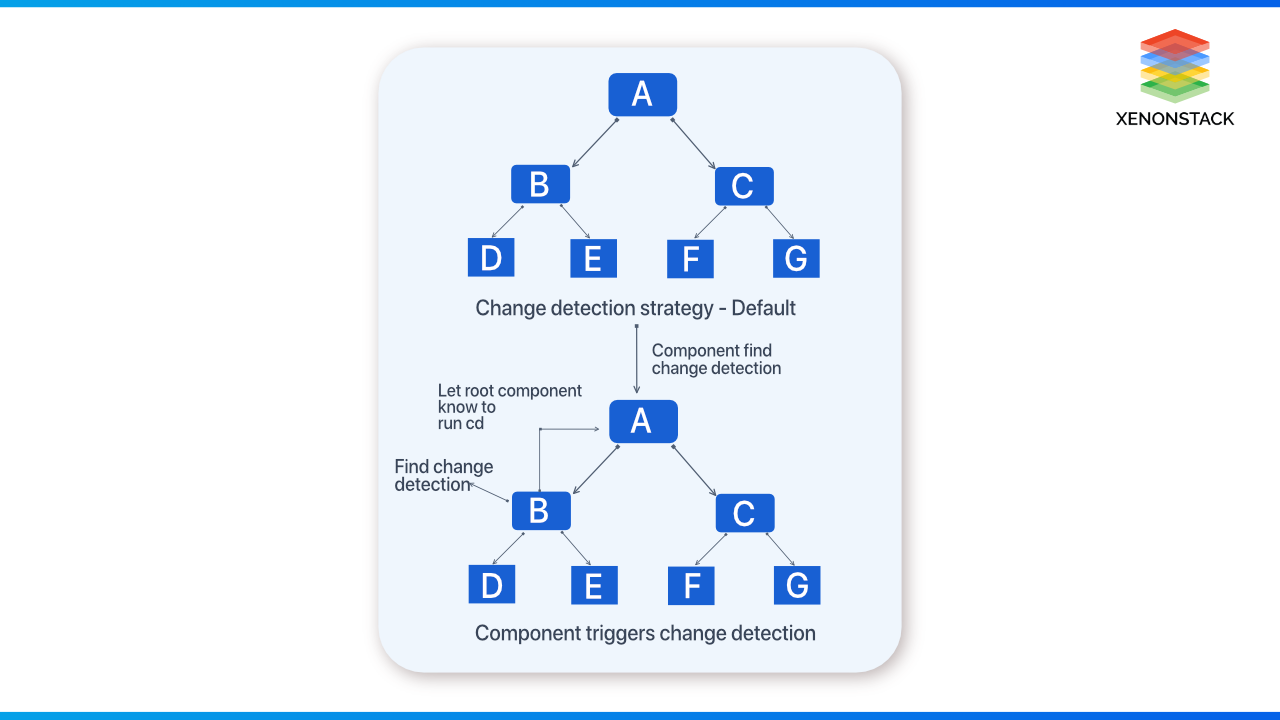

Change detection in an Angular application works like the diagram above. Angular runs change detection over the tree of components, starting at the root component, followed by its children and then their children. With the default strategy, a change in component B alone causes change detection to run across all the components.

With the default strategy, every change detection cycle re-evaluates the template bindings of every component, including bindings that read properties of objects (reference types), and updates the DOM wherever a value has changed. In a large component tree, this affects the application's performance.

This can be controlled with the OnPush change detection strategy, which tells Angular not to check every component on every change detection cycle.

The OnPush strategy makes a component smarter. Angular checks an OnPush component only when one of its @Input references changes, when an event is triggered in the component or one of its children, or when it is explicitly marked for checking (for example, by the async pipe or ChangeDetectorRef.markForCheck()). Otherwise, the component and its descendants are skipped, which improves the application's performance.

For example, suppose OnPush is applied to components B and C. When the root component changes B's input property, change detection runs from the root of the tree down through B, while OnPush components whose inputs have not changed are skipped. Using OnPush in this way can significantly improve the application's performance.
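To make the pruning concrete, here is a toy TypeScript model. It is not Angular's implementation: `ComponentNode`, `makeNode`, and `detectChanges` are hypothetical names, and each component's inputs are reduced to a single `input` reference. It assumes the rule described above: an OnPush component and its whole subtree are skipped unless its input reference has changed. In a real component, you opt in with `changeDetection: ChangeDetectionStrategy.OnPush` in the `@Component` decorator.

```typescript
// Toy model of change detection over a component tree (hypothetical names;
// this is NOT Angular's implementation). It only shows how OnPush prunes work.
type Strategy = 'Default' | 'OnPush';

// Sentinel so that every component is checked on the very first pass.
const NEVER_CHECKED = Symbol('never-checked');

interface ComponentNode {
  name: string;
  strategy: Strategy;
  input: unknown;      // object reference the parent passes through an @Input
  lastInput: unknown;  // reference seen at the previous check
  children: ComponentNode[];
}

function makeNode(name: string, strategy: Strategy, children: ComponentNode[] = []): ComponentNode {
  return { name, strategy, input: undefined, lastInput: NEVER_CHECKED, children };
}

// Depth-first walk from the root, recording each component that gets checked.
function detectChanges(node: ComponentNode, checked: string[] = []): string[] {
  // An OnPush component whose input reference is unchanged is skipped,
  // and so is its entire subtree.
  if (node.strategy === 'OnPush' && node.input === node.lastInput) {
    return checked;
  }
  node.lastInput = node.input;
  checked.push(node.name);
  for (const child of node.children) {
    detectChanges(child, checked);
  }
  return checked;
}

// Tree: Root -> [A (Default), B (OnPush) -> [D (Default)], C (OnPush)]
const nodeD = makeNode('D', 'Default');
const nodeB = makeNode('B', 'OnPush', [nodeD]);
const root = makeNode('Root', 'Default', [makeNode('A', 'Default'), nodeB, makeNode('C', 'OnPush')]);

console.log(detectChanges(root)); // first pass checks everything: [ 'Root', 'A', 'B', 'D', 'C' ]
console.log(detectChanges(root)); // nothing changed: [ 'Root', 'A' ]
nodeB.input = { id: 1 };          // the parent passes a new object to B
console.log(detectChanges(root)); // [ 'Root', 'A', 'B', 'D' ]
(nodeB.input as { id: number }).id = 2; // in-place mutation keeps the same reference
console.log(detectChanges(root)); // B is skipped again: [ 'Root', 'A' ]
```

The last step shows why OnPush works best with immutable data: mutating an object in place keeps the same reference, so the OnPush component is not checked again, while passing a new object triggers the check.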


Using Pure Pipes

In Angular, pipes transform data into a different format. For example, {{ today | date:'shortDate' }} formats a date in a short, locale-dependent form, such as 6/15/15 in the en-US locale. Pipes are divided into two categories:

- Impure pipes

- Pure pipes

A pure pipe always produces the same result for the same input, while an impure pipe may produce different results for the same input over time. Most built-in pipes are pure; a few, such as AsyncPipe and JsonPipe, are impure. During change detection, Angular evaluates each binding expression and applies the pipe to the result, if there is one.

Angular applies a useful optimization to pure pipes: their transform method is called only when the reference of the input value or one of the arguments changes. Angular caches the result for each binding and reuses it when it receives the same input. This is similar to memoization, which we can also implement ourselves.
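A minimal memoization sketch in TypeScript, assuming the same rule Angular uses for pure pipes: recompute only when an argument reference changes. `memoizeLast` and `sameArgs` are hypothetical helpers, not Angular APIs; in Angular itself, pipes are pure by default (`pure: true` in the `@Pipe` metadata).

```typescript
// Compares two argument lists element by element using reference equality (===).
function sameArgs(a: readonly unknown[], b: readonly unknown[]): boolean {
  if (a.length !== b.length) return false;
  for (let i = 0; i < a.length; i++) {
    if (a[i] !== b[i]) return false;
  }
  return true;
}

// Single-slot memoization (hypothetical helper): remembers the last arguments
// and result, and recomputes only when an argument reference changes,
// the same rule Angular applies to pure pipes.
function memoizeLast<A extends unknown[], R>(fn: (...args: A) => R): (...args: A) => R {
  let lastArgs: A | undefined;
  let lastResult: R | undefined;
  return (...args: A): R => {
    if (lastArgs === undefined || !sameArgs(lastArgs, args)) {
      lastResult = fn(...args);
      lastArgs = args;
    }
    return lastResult as R;
  };
}

let calls = 0;
const formatTotal = memoizeLast((prices: number[], currency: string): string => {
  calls++;
  const total = prices.reduce((sum, p) => sum + p, 0);
  return `${currency} ${total.toFixed(2)}`;
});

const cart = [10, 20.5];
console.log(formatTotal(cart, 'USD'));      // USD 30.50 (computed)
console.log(formatTotal(cart, 'USD'));      // USD 30.50 (cached)
cart.push(5);                               // mutation keeps the same array reference...
console.log(formatTotal(cart, 'USD'));      // ...so the stale USD 30.50 is returned
console.log(formatTotal([...cart], 'USD')); // new reference: USD 35.50
console.log(calls);                         // 2
```

Like a pure pipe, this cache is cheap because it compares references rather than deep contents; the trade-off, shown by the third call, is that in-place mutations are not detected.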

Unsubscribe from Observables

Ignoring small details during development can lead to significant problems, such as memory leaks. A memory leak occurs when an application fails to release resources it no longer uses. Observables have a subscribe method, which we call with a callback function to receive the emitted values. Subscribing to a long-lived observable opens a stream that stays open until the subscription is closed with the unsubscribe method (or the observable completes). Common causes of memory leaks include unnecessary global variables and subscriptions that are never unsubscribed.

It is good practice to unsubscribe from observables in the ngOnDestroy lifecycle hook, so that when the component is destroyed, all of its subscriptions are released.

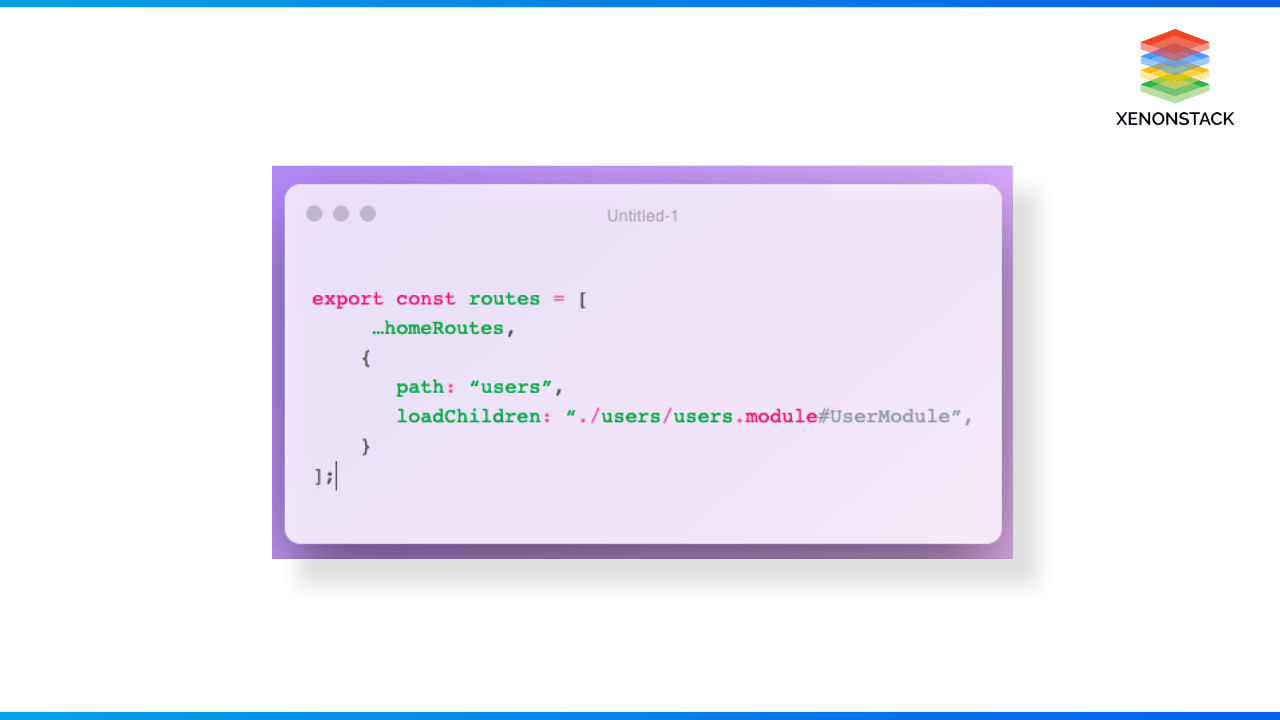
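The pattern can be sketched without any library. `TinySubject` below is a hypothetical, minimal stand-in for an RxJS Subject, and `PriceTickerComponent` only mimics Angular's `ngOnInit`/`ngOnDestroy` hooks; it is not a real Angular component. In an actual Angular app, you would keep the `Subscription` returned by `subscribe()` and call its `unsubscribe()` method in `ngOnDestroy`, or use an RxJS operator such as `takeUntil` with a subject that emits on destroy; the `async` pipe also unsubscribes automatically.

```typescript
type Unsubscribe = () => void;

// Minimal stand-in for an RxJS Subject (hypothetical, for illustration only).
class TinySubject<T> {
  private readonly listeners = new Set<(value: T) => void>();

  subscribe(listener: (value: T) => void): Unsubscribe {
    this.listeners.add(listener);
    return () => {
      this.listeners.delete(listener);
    };
  }

  next(value: T): void {
    this.listeners.forEach(listener => listener(value));
  }

  get listenerCount(): number {
    return this.listeners.size;
  }
}

// Component-like class (hypothetical): it collects every teardown function
// and releases them all in ngOnDestroy, mirroring Angular's lifecycle hook.
class PriceTickerComponent {
  latest: number | undefined = undefined;
  private teardowns: Unsubscribe[] = [];
  private readonly prices: TinySubject<number>;

  constructor(prices: TinySubject<number>) {
    this.prices = prices;
  }

  ngOnInit(): void {
    this.teardowns.push(this.prices.subscribe(price => { this.latest = price; }));
  }

  ngOnDestroy(): void {
    this.teardowns.forEach(teardown => teardown());
    this.teardowns = [];
  }
}

const priceFeed = new TinySubject<number>();
const ticker = new PriceTickerComponent(priceFeed);
ticker.ngOnInit();
priceFeed.next(101);
console.log(ticker.latest, priceFeed.listenerCount); // 101 1
ticker.ngOnDestroy();   // the component leaves the screen
priceFeed.next(102);    // no longer delivered to the destroyed component
console.log(ticker.latest, priceFeed.listenerCount); // 101 0
```

Without the `ngOnDestroy` call, the feed would keep a reference to the destroyed component's callback, which is exactly the kind of leak described above.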

Lazy Loading

An enterprise application built with Angular typically contains many feature modules, and users do not need all of them loaded at once. In large enterprise applications, the code base and the bundle size grow significantly over time. As the bundle grows, performance degrades, because every extra KB in the main bundle contributes to slower:

- Download

- Parsing

- JS Execution

This can be solved using lazy loading. Lazy loading loads only the necessary modules at startup, which reduces the initial bundle size and the load time. Other modules are loaded only when the user navigates to their routes.
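The mechanism behind lazy loading can be sketched in plain TypeScript. `LazyRouteRegistry` and its methods are hypothetical names standing in for Angular's router, and each loader plays the role of a dynamic `import()`. In an Angular route configuration, the same idea is expressed with `loadChildren`, for example `{ path: 'admin', loadChildren: () => import('./admin/admin.module').then(m => m.AdminModule) }`, where the module path and name are placeholders.

```typescript
type Loader<T> = () => Promise<T>;

// Hypothetical lazy route registry: each feature is loaded on first
// navigation only, then reused on later visits.
class LazyRouteRegistry<T> {
  private readonly loaders = new Map<string, Loader<T>>();
  private readonly loaded = new Map<string, Promise<T>>();
  loadCount = 0;

  register(path: string, loader: Loader<T>): void {
    this.loaders.set(path, loader);
  }

  navigate(path: string): Promise<T> {
    const cached = this.loaded.get(path);
    if (cached !== undefined) return cached; // already downloaded: reuse it
    const loader = this.loaders.get(path);
    if (loader === undefined) {
      return Promise.reject(new Error(`No route registered for "${path}"`));
    }
    this.loadCount++;
    const pending = loader(); // in a real app: a dynamic import() of the feature chunk
    this.loaded.set(path, pending);
    return pending;
  }
}

interface FeatureModule { name: string }

const routes = new LazyRouteRegistry<FeatureModule>();
routes.register('admin', async () => ({ name: 'AdminModule' }));
routes.register('reports', async () => ({ name: 'ReportsModule' }));
console.log(routes.loadCount); // 0: nothing is loaded at startup

routes.navigate('admin')
  .then(feature => {
    console.log(feature.name, routes.loadCount); // AdminModule 1
    return routes.navigate('admin');            // a second visit reuses the loaded feature
  })
  .then(() => console.log(routes.loadCount));   // still 1
```

The `reports` feature is never downloaded unless the user navigates to it, which is where the startup savings come from.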

Use trackBy with *ngFor

Angular uses the *ngFor directive to loop over items and display them in the DOM (by adding or removing elements). If it is not used with care, it can hurt performance. Suppose we have a feature that adds and removes employees asynchronously, and a large list of employees, each with a name and an email address, that we need to iterate over and display in the UI. By default, *ngFor tracks each item by its object reference, so when the list is replaced with new objects (for example, after re-fetching it from the server), Angular destroys the existing DOM elements and re-creates them for every item, even for items whose data did not change.

This has a large impact on performance, because rendering DOM is expensive. To fix this, we use a trackBy function, which tells Angular how to identify each item (for example, by a unique id). Angular can then keep the DOM elements of items it has already rendered and update only the items that were added, removed, or changed.
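A simplified TypeScript model of why `trackBy` helps, assuming a re-fetched employee list in which every object is new. `diffRows` is a hypothetical helper, not Angular's differ, and `TrackBy` only mirrors the `(index, item)` shape of Angular's `TrackByFunction`. In a template, the real usage is `*ngFor="let employee of employees; trackBy: trackById"` together with a component method such as `trackById(index: number, employee: Employee) { return employee.id; }`.

```typescript
interface Employee { id: number; name: string; email: string }

// Same shape as Angular's TrackByFunction: (index, item) => identity key.
type TrackBy<T> = (index: number, item: T) => unknown;

// Simplified model of list reconciliation (not Angular's real differ):
// counts rows whose DOM could be reused versus rows that must be created or removed.
// Assumes keys are unique within each list.
function diffRows<T>(
  previous: readonly T[],
  next: readonly T[],
  trackBy: TrackBy<T> = (_index, item) => item, // default: object identity
): { reused: number; created: number; removed: number } {
  const oldKeys = new Set(previous.map((item, i) => trackBy(i, item)));
  const newKeys = new Set(next.map((item, i) => trackBy(i, item)));
  let reused = 0;
  let created = 0;
  let removed = 0;
  newKeys.forEach(key => { if (oldKeys.has(key)) reused++; else created++; });
  oldKeys.forEach(key => { if (!newKeys.has(key)) removed++; });
  return { reused, created, removed };
}

const before: Employee[] = [
  { id: 1, name: 'Asha', email: 'asha@example.com' },
  { id: 2, name: 'Ben', email: 'ben@example.com' },
];
// Re-fetched list: the same people as new objects, plus one new employee.
const after: Employee[] = [
  ...before.map(e => ({ ...e })),
  { id: 3, name: 'Chen', email: 'chen@example.com' },
];
const trackById: TrackBy<Employee> = (_index, e) => e.id;

console.log(diffRows(before, after));            // { reused: 0, created: 3, removed: 2 }
console.log(diffRows(before, after, trackById)); // { reused: 2, created: 1, removed: 0 }
```

With identity tracking, every row is torn down and rebuilt; keyed by `id`, only the new employee's row has to be created.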

Avoid computation in template files

Template expressions are among the most commonly used features in Angular. We often need to perform calculations on the data we get from the backend, and to do that, we call functions in the template.

A function bound in the template runs every time change detection (CD) runs on the component, and it must finish before change detection and the rest of the code can move on.

If a function takes a long time to finish, it blocks other UI code from running, which results in a slow, laggy experience for users. So, template expressions must finish quickly. If a calculation is computationally heavy, move it into the component class and compute the result in advance, then bind the template to that precomputed value.
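The cost difference can be shown with a small, framework-free TypeScript sketch. Both classes are hypothetical, and change detection is simulated as a loop of 100 checks: the first class re-runs the calculation on every check, as a template call like `{{ totalPayroll() }}` would, while the second computes the total once when the data changes and exposes a plain field. In Angular, that precomputation would typically live in an `@Input` setter or in `ngOnChanges`.

```typescript
interface Employee { name: string; salary: number }

let payrollComputations = 0;

// The "expensive" calculation; the counter records how often it runs.
function computePayroll(employees: readonly Employee[]): number {
  payrollComputations++;
  return employees.reduce((sum, e) => sum + e.salary, 0);
}

// Anti-pattern: a template binding such as {{ totalPayroll() }} calls this on every check.
class PayrollViewCallsFunction {
  employees: readonly Employee[] = [];
  totalPayroll(): number {
    return computePayroll(this.employees);
  }
}

// Preferred: compute once when the data changes and bind to a plain field,
// e.g. {{ totalPayroll }}.
class PayrollViewPrecomputed {
  totalPayroll = 0;
  private current: readonly Employee[] = [];
  set employees(value: readonly Employee[]) {
    this.current = value;
    this.totalPayroll = computePayroll(value);
  }
  get employees(): readonly Employee[] {
    return this.current;
  }
}

const staff: Employee[] = [{ name: 'Asha', salary: 5000 }, { name: 'Ben', salary: 4200 }];

const slow = new PayrollViewCallsFunction();
slow.employees = staff;
for (let cycle = 0; cycle < 100; cycle++) slow.totalPayroll(); // each check re-runs the calculation
console.log(payrollComputations); // 100

payrollComputations = 0;
const fast = new PayrollViewPrecomputed();
fast.employees = staff;
for (let cycle = 0; cycle < 100; cycle++) void fast.totalPayroll; // each check only reads a field
console.log(payrollComputations, fast.totalPayroll); // 1 9200
```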

Usage of Web Workers

JavaScript is single-threaded: in the browser, the page's scripts run on the main thread (sometimes called the UI or DOM thread), which is also responsible for rendering and handling user input. When a script loads, the JS engine loads, parses, and executes it on this thread. Suppose our application performs a heavy computation at startup, such as calculating and rendering graphs. That work blocks the main thread and increases the application's load time, and, as noted earlier, users tend to switch to another application when load time exceeds 3 seconds. It also leads to a poor user experience.

In such cases, we can use Web Workers. A web worker runs a script on a separate worker thread, in parallel with the main thread. The worker runs in a different environment with no DOM APIs, so it has no access to the DOM; it communicates with the page by exchanging messages through postMessage.

So, using web workers for heavy computation and keeping the main thread for lightweight UI work improves efficiency and reduces load time.
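Below is a sketch of the worker side, assuming a chart that needs a moving average of its data points. `ChartRequest`, `ChartResponse`, `movingAverage`, and `handleRequest` are hypothetical names. The computation is kept as a pure, DOM-free function so that it can run inside the worker, and the message listener is registered only when the file actually runs in a worker.

```typescript
// Message shapes exchanged between the page and the worker (hypothetical names).
interface ChartRequest { values: number[]; windowSize: number }
interface ChartResponse { averages: number[] }

// Pure, DOM-free computation: a simple moving average over `windowSize` points,
// the kind of chart preparation that would otherwise block the main thread.
function movingAverage(values: readonly number[], windowSize: number): number[] {
  if (!Number.isInteger(windowSize) || windowSize < 1) {
    throw new RangeError('windowSize must be a positive integer');
  }
  const result: number[] = [];
  let sum = 0;
  for (let i = 0; i < values.length; i++) {
    sum += values[i];                                // add the newest point
    if (i >= windowSize) sum -= values[i - windowSize]; // drop the point leaving the window
    if (i >= windowSize - 1) result.push(sum / windowSize);
  }
  return result;
}

function handleRequest(request: ChartRequest): ChartResponse {
  return { averages: movingAverage(request.values, request.windowSize) };
}

// Wire the handler up only when this file runs inside a worker;
// WorkerGlobalScope is not defined on the page or in other environments.
const scope = globalThis as any;
if (typeof scope.WorkerGlobalScope !== 'undefined' && scope instanceof scope.WorkerGlobalScope) {
  scope.addEventListener('message', (event: { data: ChartRequest }) => {
    scope.postMessage(handleRequest(event.data));
  });
}
```

On the page side, the Angular CLI can scaffold a worker with `ng generate web-worker`, and a component would then create it with `new Worker(new URL('./chart.worker', import.meta.url))` (the file name is a placeholder), send `worker.postMessage({ values, windowSize: 7 })`, and read the averages in `worker.onmessage`, leaving the main thread free to keep the UI responsive.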


Final Thoughts

Performance and load time play essential roles in any business. This post showed how choosing the right compilation method and applying the right techniques can improve an application's performance. We saw how OnPush change detection, lazy loading, and web workers help achieve better performance. Before applying any of these techniques, we must understand the reason behind the slow performance, so that we choose the right approach to reach our goal.
