Overview of Software Testing

Software testing is a process which is used for increasing the quality of a software or a product and for improving it by identifying defects, problems, and errors. It is the most important phase of the whole process of software development. Software testing aims at obtaining its intentions (both implicit and explicit), but it does have certain flaws. Still, testing can be done more efficiently if certain established principles are followed.

Without proper testing, none of the application’s stakeholders can be sure whether it works as expected or not. In spite of having constraints, software testing extends to control other verification techniques like static interpretation, model checking, and data.

An important process that ensures customer satisfaction in the application. Click to explore about, AI in Software Testing

So it is necessary to understand the goals, limitations, and principles of software testing so that the effectiveness of software testing could be increased. If the client gets their software without any testing and full of bugs and errors, he can stop or dismiss all contracts. This work examines an alternative procedure, the main purpose of which is to divide testing over all the lifecycle of software, starting from taking of requirements and continuing throughout development and maintenance stages. Such a thorough transmutation of the useful post-constructional testing venture turns it into something else but testing. Such a process stops from being a testing activity and becomes Test Driven Development.

What is Test Driven Development (TDD)?

Test Driven Development (TDD) is a programming practice which combines Test First Development (TFD) with refactoring. TDD involves writing a test for a code before the actual code, followed by the code to pass the pre-written test. It is a cyclic development technique. Each cycle coincides with the implementation of a small feature. Each cycle is said to be complete once the unit-tests rendering a feature, as well as all the existing reversion tests, pass. In TDD, each of the cycles starts by writing a unit test. This is followed by the implementation necessary to make the test pass and conclude with the refactoring of the code in order to remove any duplication, replace transitional behavior, or improve the design.

A key practice for extreme programming; it suggests that the code is developed or changed exclusively by the unit testing. Click to explore about, Test Driven Development Tools and Agile Process

TDD is a key practice of extreme programming, it prescribes that the code is developed or changed exclusively on the basis of the unit test results. The first developer creates the classes of the system together with the correspondent class interfaces. Then, the developer writes the test case for each class. Finally, each of the components is involved in the testing process, consisting of two activities: to execute the tests and, when some of them fail, to refactor the code in order to remove the bugs, duplicity & code smells which are supposed to be the cause of the failure. The process is said to be over when all the tests succeed. TDD can be compiled in the following three steps -

Step 1 - Write a failing test

Write a test for your program keeping in mind all the possible challenges it can face. As there is no code yet, it’s definitely going to fail.

Step 2 - Make the test pass

Write the minimum amount of code for your test, in order to make the test pass.

Step 3 - Refactor

Improve the code quality of your code without affecting the pre-written and new test results (i.e, already passed tests).

What is Acceptance Test Driven Development (ATDD)?

ATDD is short for Acceptance Test Driven Development. In this process, a user, business manager and developer, all are involved. First, they discuss what the user wants in his product, then the business manager creates sprint stories for the developer. After that, the developer writes tests before starting the projects and then starts coding for their product. Every product/software is divided into small modules, so the developer codes for the very first module then test it and sees it getting failed.

If the test passes and the code are working as per user requirements, it is moved to the next user story; otherwise, some changes are made in coding or program to make the test pass. This process is called Acceptance Test Driven Development.

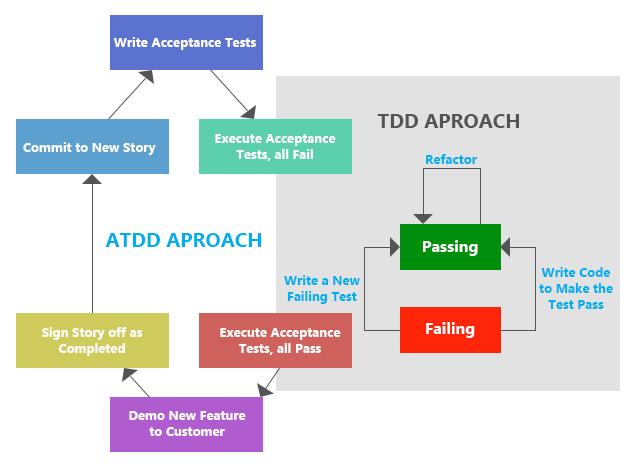

Acceptance Test Driven Development Workflow with TDD

Testing Levels For Acceptance Test Driven Development

The listed below are the testing levels of Acceptance Test Driven Development:

- Unit Tests

- Integration Tests

- System Tests

- Acceptance Tests

Automation testing is responsible for completing repetitive tasks with better accuracy and less time span. Click to explore about, Software Testing Automation Tools

Why we use Test Driven Development?

Test Driven Development (TDD) is not just a good programming practice but it also brings along a lot of benefits as well. Following are the advantages of implementing Test Driven Development to the code:- High test coverage is one of the benefits of TDD. As with TDD, the tests are written before the actual code, which enables refactoring the code easily.

- TDD enhances bug-free codes, as bugs are caught in the very start of the development of a program.

- Refactoring is done with confidence, as the implemented code is tested and verified by the continuous passing of the pre-written tests.

- It is easy to maintain the code as one can check anytime the functionality of the function and it helps to keep a track on your code and its functionality.

- It enhances you to write small test codes which are easy to debug and save you with the complexity of the code. As you know well when coding with React, the code grows in no time.

- You don’t end up with dead codes.

- Last but not the least, it makes you think before you code and gives you a pretty clear image of what are you trying to achieve from the program you are going to build.

Test Driven Development with React.JS

Test Driven Development has many benefits while building your code— one of the advantages of high test coverage is that it enables easy code refactoring while keeping your code clean and functional. If you are using React.JS for designing your UI part, you have realized that your code can grow very fast, as you create the React.js component and they have states, props, functions, and classes. And if you have used Redux with your React.js, and you are familiar with Dispatcher, Reducers, Actions, and Store, then you should need TDD for your React.js App to test each and every component to check whether your components are giving correct output or not, whether your dispatchers are dispatching actions correctly and storing your props data to store or not, and also, whether your states are changing as you want them to change or not.

A software development process which includes test-first development. Click to explore about, Test-Driven Database Development

To handle asynchronous calls in React.js you need middleware. Suppose you are using hundreds of APIs in your app, you will need to create a common middleware for all of the API requests. And you have to test your middleware with the testing tools that this is giving the correct response from the APIs, to store that API data into Reducers. In the ReactJS we use different testing tools, some for Unit testing, some for unit testing, some tools for reacting utility testing and some tools for test coverage results. As we all know React.js is developed by Facebook, their developers use Jest for javascript testing. We have described some tools below which one can use for their Reactjs App.

Unit Testing With Stub

A stub is an object with the predetermined behavior of a function. It replaces the function and uses a predefined data to answer the calls of the function during tests. Stubs provide recorded answers to calls made during the test, usually not responding at all to anything outside what is listed for the test. Stubs are used when we don’t want the real function to interact with the tests. Stubs can be useful for a number of tasks, some are listed below:- Replacing external calls which make the test slow and difficult to write.

- Triggering different components depending on the outputs of a single function.

- Testing unexpected conditions.

let stub = sinon.stub();

stub('hello_world');

console.log(stub.firstCall.args); //output: ['hello_world']

Unit Testing with Mock

The mock function makes it easy to test the links between code by cloning the function as a new fake object with all the passed parameters of the function. It doesn’t affect the actual implementation of a function and tests the links with the new fake object. We can also use Mock when we don’t want to invoke production code but at the same time, we want to verify that our code did execute. Mock is useful if you need to stub various functions from a single object. If not, the stub is much easier as you are just replacing a single function. Unlike the stub, mocks can define expected outcome from the test. Example, Let’s take a function which invokes a callback function every time the array is rendered.

function forEach(items, callback) {

for (let index = 0; index < items.length; index++) {

callback(items[index]);

}

}

const mockCallback = jest.fn();

forEach([0, 1], mockCallback);

// The mock function is called twice

expect(mockCallback.mock.calls.length).toBe(2);

// The first argument of the first call to the function was 0

expect(mockCallback.mock.calls[0][0]).toBe(0);

// The first argument of the second call to the function was 1

expect(mockCallback.mock.calls[1][0]).toBe(1);

Setting up Environment for Test Driven Development

When starting with testing, the most important thing we need to do is set-up environment for our tests. We require an environment for writing and running our tests. We basically need an assertion library like Chai and a testing framework like Enzyme, Jest or Mocha for the implementation of our test codes and check whether the tests are working as required or not. We can use any one of the testing frameworks from Enzyme, Jest, Mocha, Istanbul etc. It is generally preferred using Jest for unit testing as it contains almost all the required tools for performing a unit test on a component and also facebook uses jest for all its javascript codes. You need to install Node.JS to provide a runtime environment for your javascript code and also NPM, as we use NPM packages for our test codes. Here are the steps for setting up the environment - Installing packages for your tests. Syntax -$npm install --save-dev jest

$npm install --save-dev enzyme

$npm install --save-dev istanbul

//package.json

{

"name": "demo",

"version": "0.1.0",

"private": true,

"dependencies": {

"react": "^16.1.1",

"react-dom": "^16.1.1",

"react-scripts": "1.0.17"

},

"scripts": {

"start": "react-scripts start",

"build": "react-scripts build",

"test": "react-scripts test --env=jsdom",

"eject": "react-scripts eject"

},

"devDependencies": {

"enzyme": "^3.2.0",

"istanbul": "^0.4.5",

"jest": "^21.2.1"

}

}

//test.js

describe('Addition', () => {

it('should know 5 + 4 equals 9 ', () => {

expect(5 + 4).toBe(9);

});

});

What are the best Test Driven Development Tools?

The below highlighted are the Test Driven Development Tools and Unit testing types:

Unit Testing React App with Jest

Unit testing is the test which is done on components to validate the functionality of the component. Unit tests are independent (free from dependencies). A number of tests can be performed on a component, from its rendering to the final expected outcome from the component. Unit tests should be done on the functionality of a particular function and assume the behaviour of the surrounding components. The unit test should be simple, easy implementation of code and should easily and quickly run, expecting a quick response. Small unit tests compile to a number of unit test for the component and more the number of tests, more the bugs are caught, which results in better code quality.

What is Jest?

Jest is an open-source testing framework. It runs tests within a fake DOM in the command line. It supports the creation of mock function and provides a good set of matches that makes assertions easy to understand. Jest also provides a new feature called Snapshot Testing, which saves the output of the rendered component to a file and compares the file with a snapshot of the succeeding rendering components. This helps us to know if our component is still on track or has diverted from its expected behavior. For using Jest unit test in the React.js app, Jest has to be installed with the following command: $ npm install --save-dev jest And add the following line in your package.json file.

{

"scripts": {

"test": "jest",

"test:watch": "npm test -- --watch"

}

}

//test.js

describe('Addition', () => {

it('should know 5 + 4 equals 9 ', () => {

expect(5 + 4).toBe(9);

});

});

Unit Testing React App with Enzyme

The Enzyme is an open-source JavaScript Testing utility, maintained by Airbnb for React, that makes it easier to assert, manipulate, and traverse your React Component’s output. React is becoming increasingly popular and widely-used JavaScript library for developing web applications & components based library. The enzyme is used for testing a component as a unit, based on the component structure, manipulation & traverse components output. If you like to use enzyme with custom assertions and convenience functions for testing your React components, you can also work with other frameworks like -- chai-enzyme with Mocha/Chai.

- jasmine-enzyme with Jasmine.

- jest-enzyme with Jest.

- should-enzyme for should.js.

- expect-enzyme for expecting.

Enzyme API

Enzyme API focuses on rendering the react component and retrieving specific nodes. There are three ways to render a component.Shallow Rendering

Shallow rendering can be used while writing unit tests for React. Shallow rendering is used to constrain yourself to test a component as a unit and state points about what its render method returns, without bothering about the behavior of child components, which are not instantiated or rendered and ensure that your tests aren't indirectly asserting on the behavior of child components. Import : import { shallow } from 'enzyme'; shallowRenderer() is used to render the component you’re testing, and from which you can extract the component’s output. shallowRenderer.render() is comparable to ReactDom.render() but it doesn’t require DOM and only renders a single level deep. This means you can test components that are isolated from how their children are implemented.Full Rendering

Unlike shallow or static rendering, full rendering is used where you have components that need to interact with DOM APIs, or require the full lifecycle in order to test the component fully, for example, componentDidMount(). Full DOM rendering expects that a full DOM API, which means it should run in an environment that looks like a browser environment. If you do not want to run your tests in the browser environment, then you can use Mount, which is dependant on a library called jsdom. Full DOM rendering actually mounts the component in the DOM, so that the tests can affect each other if they are all using the same DOM, so writing your tests and, if necessary to use .unmount() or something similar as cleanup. Import : import { mount } from 'enzyme'Static Rendering

Static Rendering uses render( ) function to render react components to static HTML rendering and analyzing the output. Render returns the output similar to the other enzyme rendering method like mount and shallow. However, static rendering uses a third party library Cheerio for parsing and traversing HTML. Import import { render } from 'enzyme'; Exampleimport React from 'react';

class Demo extends React.Component {

render() {

return ( < div className = "demo" >

< span > Demo Component < /span> < /div>

);

}

}

export default Demo;

//test.js

import React from 'react';

import {

shallow

}

from 'enzyme';

import Demo from '../demo';

describe("A suite", function() {

it("should render the Demo Component", function() {

expect(true).toBe(true);

expect(shallow( < Demo / > ).contains( < span > Demo Component < /span>)).toBe(true);

});

});

Istanbul - A Javascript Code Coverage Tool

Istanbul is a Javascript code coverage tool written in JS. It is used as a command line tool as well as a library. It can be used as an escodegen code generator. It is used for Reporting HTML and Console as well. It tracks statement, branch and function coverage giving results in line by line with 100% fidelity. Istanbul is JS code with line counters so that you can track how well your unit-tests exercise your codebase. Istanbul.js can be easily implemented with react testing modules like Mocha, TAP, AVA. For using Istanbul test coverage in React.js app, you have to install the nyc with the following command. $ npm i nyc --save-dev And add following line in your package.json file.{

"scripts": {

"test": "nyc mocha"

}

}

Redux Unit Tests

There are two types of Redux unit tests listed below:

What is Redux Action Tests?

Action tests using chai assertion library for unit testing the actions for the display features.//action.test.js

import * as actions from '../../actions/display';

import * as types from '../../constants/DisplayType';

import chai from 'chai';

let expect = chai.expect;

describe('actions', () => {

it('should create an action to change value of selected content', () => {

const value = "Item";

const expectedAction = {

type: types.SELECT_ITEM,

selectItem: "Item"

}

expect(actions.itemSelect(value)).to.deep.equal(expectedAction)

})

it('should create an action to change index of the selected item', () => {

const value = "2";

const expectedAction = {

type: types.SELECT_INDEX,

selectIndex: "2"

}

expect(actions.indexSelect(value)).to.deep.equal(expectedAction)

})

it('should create an action to open ad close notification menu', () => {

const value = true;

const expectedAction = {

type: types.OPEN_NOTIFICATION,

NotificationOpen: true

}

expect(actions.isNotifyOpen(value)).to.deep.equal(expectedAction)

})

})

What is Redux Reducer Tests?

Reducer tests using chai assertion library for unit testing the reducer for the display features.import displayReducer from '../../reducers/displayReducer';

import * as types from '../../constants/DisplayType';

import chai from 'chai';

let expect = chai.expect;

describe('actionReducer', () => {

it('should return initial state', () => {

const expectedAction = {

selectedItem: 'default',

selectedIndex: '1',

isNotificationOpen: false

}

expect(displayReducer(undefined, {})).to.eql(expectedAction)

})

it('should return boolean value for notification open and close', () => {

const value = true;

const expectedAction = {

isNotificationOpen: value

}

expect(displayReducer([], {

type: types.OPEN_NOTIFICATION,

NotificationOpen: value

})).to.deep.equal(expectedAction)

})

})

Summarizing Test Driven Development

In the end, it is safe to say that Test Driven Development must be adopted by as many developers as possible, in order increase their productivity and improve not only the code quality but also to increase the productivity and overall development of software/program. TDD also leads to more modularized, flexible and extensible code.How Can XenonStack Help You?

XenonStack follows the Test Driven Development Approach in the development of Enterprise level Applications following Agile Scrum Methodology.

Visual Analytics Services

Turn your data into a graphical representation with Visual Analytics Services. Visual Analytics with Javascript helps you understand trends by analyzing and explaining data in real-time. Unlock the potential of your Big Data with interactive Visual Analytics. Our highly skilled Statisticians helps you analyze and decipher your data to present it visually in form of images, graphs, charts to discover new opportunities and improve decision making.

Interactive UI Development

Build Interactive UI and Dashboard by applying the principles of functional reactive programming. At XenonStack, we use Google's Material Design components in the React ecosystem to build Dashboards. React facilitates the creation of reusable high-performance UI components that can be composed to form modern web UIs.

Data Visualization Services

Discover hidden insights in your data with Data Visualization. Provide a Visually Appealing and Informative BI Analytics with Data Visualization Tools like Tableau, PowerBI. Transform your data into intelligent insights with Predictive Modelling using Data Mining.

- Discover more about Reactive Programming Solutions

- Read More about System Testing Types, Best Practices and Tools

.webp?width=1921&height=622&name=usecase-banner%20(1).webp)