What is Google BigQuery?

Google BigQuery is a fully managed, serverless cloud data warehouse from Google, used for storing and processing massive data sets with SQL queries. It is an analytical database, which makes it different from transactional databases such as MySQL and MongoDB. BigQuery can be used as a transactional database, but individual queries will then take longer to execute than they would on a system built for that workload.

What is Google Datalab?

Google Datalab is an interactive tool for exploring, visualizing and transforming data and for building machine learning models on Google Cloud Platform (GCP). It connects to multiple cloud services so that the main focus can stay on data science tasks.


Why Choose BigQuery?

One of the main perks of using Google Cloud Platform (GCP) is access to Google BigQuery. BigQuery can scan millions or even billions of rows of a table in seconds, so queries that used to take several hours can finish in seconds. Apart from speed, it also takes care of infrastructure management. The market offers many solutions for the same problem, and which one is best depends on the use case. Some of the reasons organizations choose BigQuery are as follows -

Provides a Managed Solution

Here, a managed solution means managed infrastructure that is provided entirely by Google BigQuery. The prime focus can therefore be building the product properly and building it fast, instead of spending a significant amount of engineering effort and time on infrastructure.

Keeps the Cost Contained

No organization wants to pay more than necessary. Because BigQuery is a cloud-based service, the user pays only for what is actually used, i.e., on a pay-per-use basis, which makes the pricing flexible.
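To make pay-per-use concrete, here is a minimal sketch of a cost estimate. It assumes on-demand pricing, where a query is billed by the number of bytes it processes. The function name and the example rate are hypothetical; the real rate depends on region and pricing model and should be taken from the current price list.

```python
TIB = 2 ** 40  # number of bytes in one tebibyte


def estimate_on_demand_cost(bytes_processed: int, price_per_tib: float) -> float:
    """Estimate the charge for a query that processes `bytes_processed` bytes."""
    if bytes_processed < 0 or price_per_tib < 0:
        raise ValueError("bytes_processed and price_per_tib must be non-negative")
    return bytes_processed / TIB * price_per_tib


# Hypothetical rate used only for illustration, not an official price.
EXAMPLE_PRICE_PER_TIB = 5.0

# A query that scans 250 GiB at the example rate.
print(estimate_on_demand_cost(250 * 2 ** 30, EXAMPLE_PRICE_PER_TIB))
```

Because the cost follows the bytes scanned, selecting only the needed columns is usually cheaper than selecting every column.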

Ability to Greatly Scale up and Manageable in a Small Amount of Time

In BigQuery, fully managed means that the developer does not need to take care of the infrastructure or the database administration. There is no need to think about deploying clusters, configuring compression or setting up disks as the data scales. BigQuery takes care of all of these things itself.

Availability and Reliability

The general purpose of using any service is that data should always be available. In BigQuery, data stays available because it is replicated across multiple data centers. BigQuery replicates the data between several zones to maintain availability, and it also provides load balancing among the data centers.


Why Choose Google Datalab?

Other tools can be used for data visualization and transformation, but with Datalab we can run a query, look at the output and update the documentation in one place. It is one of the best tools for transforming and visualizing data. Apart from data visualization, there are several other reasons why Google Datalab came into existence, and some of them are as follows -

Capability to Scale up

Whether we are analyzing terabytes or petabytes of data, it can quickly scale up to as much data as required. There is also no need to think about how to configure compression; Datalab takes care of it.

Machine Learning with Lifecycle Support

Datalab provides machine learning support across the lifecycle: it explores data and builds and evaluates machine learning models with the help of TensorFlow or Cloud Machine Learning Engine.

Data Management and Visualization

Google Datalab interactively transforms, explores and visualizes data with the help of BigQuery or Cloud Storage. The management of the data is handled by Google BigQuery, so there is no need to think about it.

Multi-language Support

Different users work in different languages, so it is essential for any platform to support several of them. Currently, the languages supported by Cloud Datalab are Python, SQL and JavaScript (for BigQuery user-defined functions).

How to Use BigQuery?

With the web UI in the Google Cloud Platform (GCP) console, we can use BigQuery through a visual interface for tasks such as running queries, importing data and exporting data. To use BigQuery in different ways, follow the steps mentioned below -

Query a Public Dataset

The web UI provides an interface for querying tables, including the public datasets offered by BigQuery itself. To query data in a public dataset, perform the following steps -

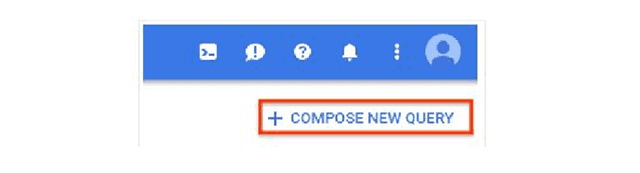

- Go to the BigQuery web UI in the GCP console.

- Click on the compose new query button.

- Write the query which you want to run.

- After writing the query, validate it with the query validator.

- If the checkmark turns green, there is no error, so run the query.
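The same validate-then-run flow can also be sketched in Python. This is a minimal sketch, assuming the `google-cloud-bigquery` client library is installed and credentials are configured. The helper names and the choice of the `usa_names` public table are assumptions made for illustration. A dry run plays the role of the query validator: it checks the query without running it and reports how many bytes it would process.

```python
# Example query against a public dataset (table choice is an assumption).
PUBLIC_QUERY = """
SELECT name, SUM(number) AS total
FROM `bigquery-public-data.usa_names.usa_1910_2013`
WHERE state = 'TX'
GROUP BY name
ORDER BY total DESC
LIMIT 10
"""


def validate_query(client, sql):
    """Dry-run `sql` and return the number of bytes it would process."""
    # Imported here so the module loads even where the library is missing.
    from google.cloud import bigquery

    config = bigquery.QueryJobConfig(dry_run=True, use_query_cache=False)
    job = client.query(sql, job_config=config)  # fails here if the SQL is invalid
    return job.total_bytes_processed


def run_query(client, sql):
    """Run `sql` and return the result rows as a list of dictionaries."""
    return [dict(row.items()) for row in client.query(sql).result()]
```

With a real client, created as `bigquery.Client()` after `from google.cloud import bigquery`, we would call `validate_query(client, PUBLIC_QUERY)` first and `run_query(client, PUBLIC_QUERY)` only if no error is raised.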

Creating a Dataset

We can create a dataset in the web UI for storing data. To create the dataset, follow the given steps -

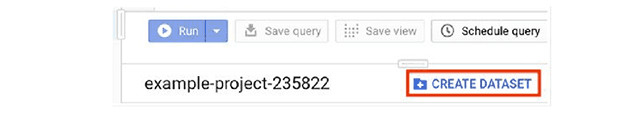

- Again, go to the BigQuery web UI in the GCP console.

- In the navigation panel, go to the resource section, create a new project and give it a name.

- After that, go to the details section and click on the create dataset button. It will ask for several fields.

- While creating the dataset, enter a proper dataset ID and data location. Currently, the public datasets are stored in the US location.

- Leave all the other fields as default and click on create dataset, and the dataset will be created.
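The steps above can also be sketched in Python. This is a minimal sketch, assuming the `google-cloud-bigquery` client library is installed. The helper names are hypothetical, and the ID check is a deliberately conservative assumption (ASCII letters, digits and underscores, up to 1,024 characters), not the service's full rule set.

```python
import re

# Conservative pattern for a dataset ID (an assumption, see the text above).
_DATASET_ID = re.compile(r"[A-Za-z0-9_]{1,1024}")


def is_valid_dataset_id(dataset_id: str) -> bool:
    """Return True if `dataset_id` passes the conservative check."""
    return _DATASET_ID.fullmatch(dataset_id) is not None


def create_dataset(client, project: str, dataset_id: str, location: str = "US"):
    """Create `project.dataset_id` in `location`, leaving other fields as default."""
    # Imported here so the module loads even where the library is missing.
    from google.cloud import bigquery

    if not is_valid_dataset_id(dataset_id):
        raise ValueError(f"invalid dataset ID: {dataset_id!r}")
    dataset = bigquery.Dataset(f"{project}.{dataset_id}")
    dataset.location = location
    # exists_ok=True makes the call safe to repeat.
    return client.create_dataset(dataset, exists_ok=True)
```

The location defaults to `"US"` here only because that is where the public datasets are stored; any supported location can be passed instead.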

Combining BigQuery and DataLab

Google BigQuery is a helping hand for querying large data sets: it is fast when performing SQL queries on them. We can use Datalab and BigQuery together for faster database queries with SQL-like syntax. To use Google Datalab with BigQuery, make sure that -

- You are signed in to a Google account.

- A Google Compute Engine virtual machine has been created and is currently active.

- The APIs related to machine learning and Dataflow have been enabled.

- You have an active project and an active notebook.
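Once these prerequisites are met, a notebook cell can pull query results into a DataFrame for exploration and plotting. The following is a minimal sketch, assuming the notebook environment has the `google-cloud-bigquery` library and pandas installed. It uses that general client library rather than any Datalab-specific API, and the function name is hypothetical.

```python
def query_to_dataframe(client, sql):
    """Run `sql` in BigQuery and return the result as a pandas DataFrame."""
    # QueryJob.to_dataframe() requires pandas in the notebook environment.
    return client.query(sql).to_dataframe()


# In a notebook cell, with `client` created as bigquery.Client():
#   df = query_to_dataframe(client, "SELECT 1 AS x")
#   df.head()
```

From there, the usual pandas tools apply, so the heavy scan stays in BigQuery and only the small result set is handled in the notebook.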

A Centralized Approach

Building a highly scalable and cost-effective strategy for data management helps enterprises increase business efficiency. For making strategic decisions based on data analysis, we advise taking the following steps -

- Get an Insight about Google Analytics

- Click to explore about How DevOps on GCP Works?

- Learn more about XenonStack Google Cloud Solutions
