Overview of Building an Advanced Analytics Platform

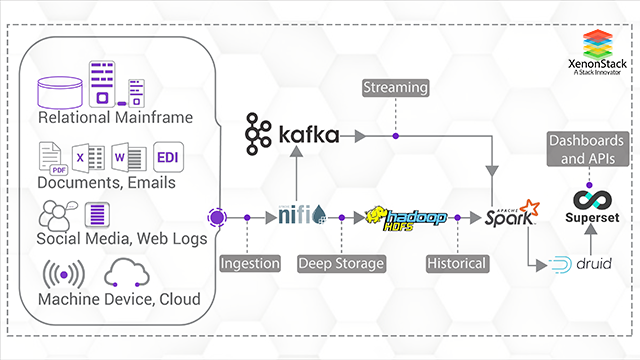

- Building an analytics platform starts with collecting data from the APIs of various sources and integrating it into the application, which must be able to handle large volumes of data.

- Next, plan and build storage that can absorb constant updates. Processing then turns the collected raw data into relevant information, which is visualized and analyzed to develop applications.

Challenges of Building a Real-Time Platform

- Real-Time Predictions

- Handling Large Volumes of Data

- Real-Time Data Monitoring and Security

- Quality and Storage of Data

- Data Integration and Analytics

Solution Offerings for Advanced Analytics Platform

Build an Advanced Analytics Platform for the Oil and Gas industry from various types of data sources. Subscribe to an FTP server that delivers EBCDIC files every month with Oil and Gas data for the state of Texas. Get the historical data and store it in SQL Server.

Data Collection from Batch Data Sources
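The EBCDIC files from the FTP server have to be decoded before they can be loaded alongside the SQL Server data. The sketch below shows one way to do that in plain Python, assuming the files use IBM code page 037 and fixed-width records. The record length, field names, and offsets are hypothetical placeholders; the real layout comes from the data provider's file specification, and packed-decimal fields, if present, would need separate handling.

```python
# Assumption: US EBCDIC (IBM code page 037), which Python ships as "cp037".
EBCDIC_CODEC = "cp037"

# Hypothetical fixed-width layout: (field name, start offset, end offset).
RECORD_LENGTH = 20
FIELDS = [("lease_id", 0, 6), ("operator", 6, 16), ("month", 16, 20)]


def decode_records(raw: bytes, record_length: int = RECORD_LENGTH):
    """Split EBCDIC bytes into fixed-width records and decode each field.

    A trailing partial record (fewer than record_length bytes) is ignored.
    """
    records = []
    for start in range(0, len(raw) - record_length + 1, record_length):
        text = raw[start:start + record_length].decode(EBCDIC_CODEC)
        records.append({name: text[lo:hi].strip() for name, lo, hi in FIELDS})
    return records


# Build a fake one-record file by encoding readable text to EBCDIC.
sample = "000123ACME OIL  0619".encode(EBCDIC_CODEC)
print(decode_records(sample))
```

A function along these lines could run on each downloaded file before the decoded rows are written to the Data Lake.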

SQL Server and the FTP server with its EBCDIC files are the two batch data sources. Apache Beam, running on Cloud Dataflow, collects data from both sources and stores it in the Data Lake.

Data Collection from Streaming Data Sources (Google Pub/Sub)
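On the sensor side, streaming collection comes down to serializing each machine state and handing it to a publisher. The following is a minimal sketch, assuming JSON payloads. The field names (`machine_id`, `state`, `temperature_c`) and the `publish_state` helper are illustrative and not taken from the original setup. The publisher is passed in as a plain callable so the example runs without cloud credentials; with the `google-cloud-pubsub` client it would typically wrap `PublisherClient.publish(topic_path, data)`.

```python
import json
import time
from typing import Callable, Optional


def build_state_message(machine_id: str, state: str, temperature_c: float,
                        timestamp: Optional[float] = None) -> bytes:
    """Serialize one machine-state reading as UTF-8 encoded JSON bytes."""
    payload = {
        "machine_id": machine_id,
        "state": state,
        "temperature_c": temperature_c,
        # Use the current time unless the caller supplies a timestamp.
        "timestamp": time.time() if timestamp is None else timestamp,
    }
    return json.dumps(payload, sort_keys=True).encode("utf-8")


def publish_state(publish: Callable[[bytes], object], **reading) -> bytes:
    """Build a message from the reading and pass it to the publish callable."""
    message = build_state_message(**reading)
    publish(message)
    return message


# Stand-in publisher: collect messages in a list instead of sending them.
sent = []
publish_state(sent.append, machine_id="pump-7", state="RUNNING",
              temperature_c=81.5, timestamp=1700000000.0)
print(sent[0].decode("utf-8"))
```

Keeping serialization separate from the transport makes it easy to test the payload format locally before wiring in the real topic.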

Get data from sensors attached to the machines in Oil and Gas refineries. Deploy Python code on each sensor to write the various machine states to Google Pub/Sub, which serves as the real-time data stream.

Predictive and Other Advanced Analytics

Build and train models using the data in BigQuery, then run ML and DL algorithms on incoming data to meet the prediction targets.

Building a Model Using Deep Learning Algorithms

- Explore Data and Launch Cloud Datalab

- Invoke BigQuery and Draw graphs in Cloud Datalab

- Create a sampled dataset, clone the repository, and run the notebook

- Create and develop a TensorFlow model (Wide and Deep) in Datalab on a small sampled dataset.

- Preprocess data at scale using Cloud Dataflow for Machine learning.

- Train on Cloud ML Engine, tune the hyperparameters, and create the model.

- Deploy a trained model as a Microservice to make Real-Time and Batch Prediction.

- Deploy a Model web application that consumes the Machine Learning service.

- Apply the model to the data, then transfer the output to Cloud Bigtable and use it from there.
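For the sampling step in the list above, one common approach is to hash a stable key so that the same rows land in the sample on every run. The sketch below is an assumption about how such a sample could be built, not the method used in the original notebook; the `well_id` key, the generated rows, and the 5 percent rate are hypothetical.

```python
import hashlib


def in_sample(key: str, percent: int) -> bool:
    """Return True if the key hashes into the first `percent` of 100 buckets."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


# Hypothetical rows standing in for a large table of readings.
rows = [{"well_id": f"W{n:05d}", "reading": n * 0.5} for n in range(10_000)]

# Keep roughly 5 percent of rows; the choice depends only on well_id,
# so rerunning the script selects exactly the same rows.
sample_rows = [row for row in rows if in_sample(row["well_id"], 5)]
print(len(sample_rows), "of", len(rows), "rows sampled")
```

Because membership depends only on the key, the small development sample stays a consistent subset of the full data. The same idea carries over to SQL, for example by filtering on a hash of the key modulo 100.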

Overview and Applications of Predictive Analytics

Predictive Analytics takes a proactive approach built on statistical techniques and models. A prediction acts as a warning signal before an event occurs. Predictive Analytics involves decision making, collecting the right data, creating a model, applying real-time analytics and predictions, and finally deploying the application. Predictive Maintenance combined with IoT is gaining ground in the market, and enterprises are seeing a flood of new business applications. Predictive Analytics also analyzes data to identify unknown risks and failures, which makes applications more secure, and it drives decisions and actions in real time. Real-time applications of Predictive Analytics include:

- Weather Forecasting

- Risk Management

- HealthCare AI applications

- Disaster Management

- Fraud Detection

- Cyber Security
