Introduction

Model interpretability is essential for making AI systems more productive and for earning users' trust. Akira AI uses Explainable AI to explain its entire AI system, from data collection to model monitoring. It provides explanations for every type of user, from the end customer to the developer, so that each can understand the reason behind every output. This article discusses how Akira AI explains its models and algorithms, from model comparison to the performance of the selected models.

Algorithm and Model Explanation

This section explains the model step by step, to make AI systems more productive and to help developers understand the reason behind each output:

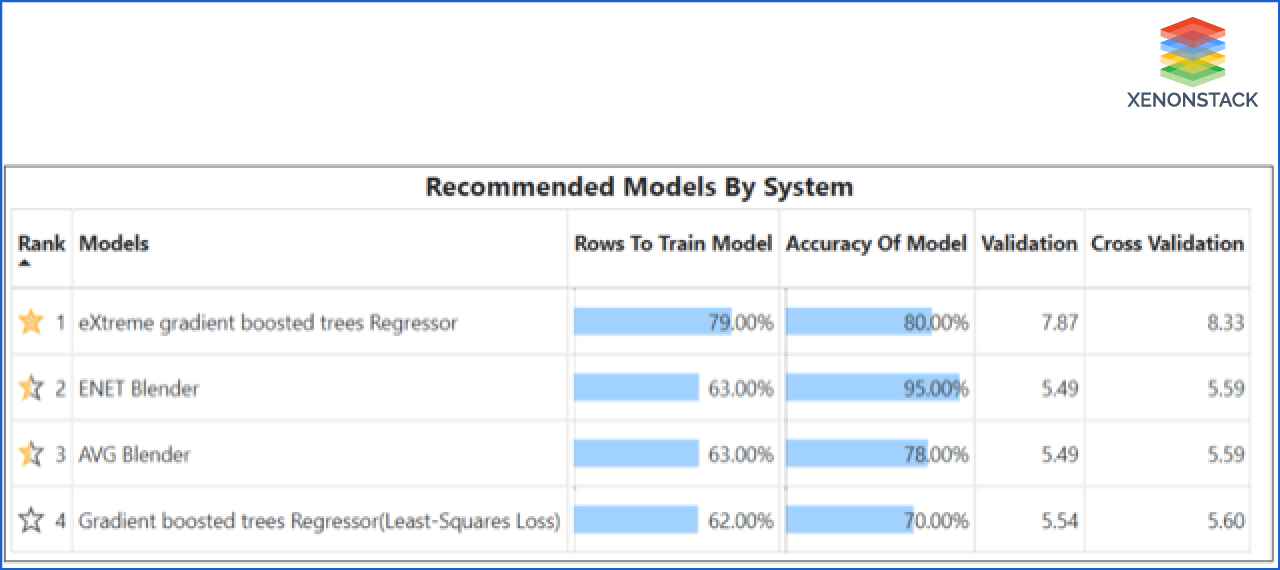

Recommended Models by System

Several models can usually solve a given problem, and any one of them could be used. Selecting the right model matters if the system is to work correctly and efficiently. This dashboard shows the top models and some of the attributes that explain why the system chose them.

- This dashboard shows the models recommended by the system. Models are ordered so that the topmost model is the one the system recommends most strongly.

- This dashboard also shows each model's attributes, such as accuracy, training data, and RMSE.
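The ordering idea can be illustrated with a minimal sketch. The article does not say how Akira AI actually ranks models, so the rule used here (higher accuracy first, lower RMSE as a tie-breaker) is an assumption, and the `Candidate` class, the model names, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical record for one candidate model shown on the dashboard."""
    name: str
    accuracy: float   # fraction of correct predictions on held-out data
    rmse: float       # root mean squared error on held-out data
    train_rows: int   # number of rows the model was trained on


def rank_candidates(candidates):
    """Return candidates best first.

    Assumed rule: higher accuracy wins, lower RMSE breaks ties.
    """
    return sorted(candidates, key=lambda c: (-c.accuracy, c.rmse))


# Made-up example values, listed in no particular order.
candidates = [
    Candidate("logistic_regression", 0.86, 0.55, 50_000),
    Candidate("random_forest", 0.91, 0.47, 50_000),
    Candidate("gradient_boosting", 0.91, 0.42, 50_000),
]

ranked = rank_candidates(candidates)
for position, c in enumerate(ranked, start=1):
    print(position, c.name, c.accuracy, c.rmse, c.train_rows)
```

A real recommender would likely weigh more attributes than these two. The point of the sketch is only that an explicit, inspectable sort key makes the ranking itself explainable.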

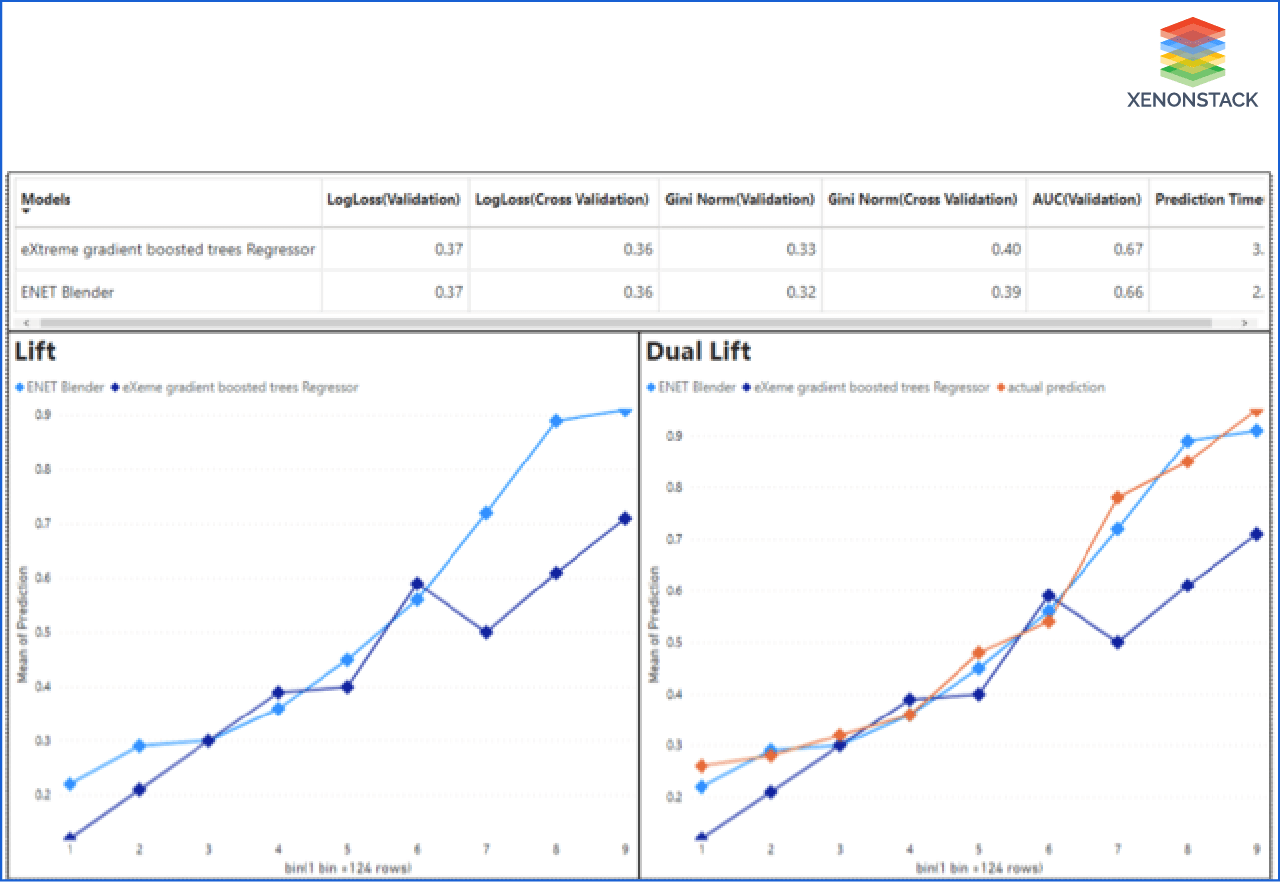

Model Comparison

The previous dashboard shows the models the system recommends. Users will naturally ask why the system ranks these few models at the top out of hundreds of candidates. In this step, Akira AI compares models on various attributes to show why the system recommends a given model.

- In this dashboard, models are compared with each other based on various parameters.

- This dashboard shows the LogLoss, Gini Norm, AUC, and Prediction Time values of the models selected by the user.

- This dashboard also shows two charts, Lift and Dual Lift, to compare which model performs better.
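As a rough illustration of the comparison metrics named above, the following sketch computes log loss, AUC, normalized Gini, and a simple lift value for two binary classifiers. It uses only the Python standard library; the labels and scores are made up, and the function names are hypothetical rather than part of any Akira AI API. Normalized Gini is taken as `2 * AUC - 1`, which holds for a binary target.

```python
import math


def log_loss(y_true, y_prob, eps=1e-15):
    """Mean negative log-likelihood of the true labels (lower is better)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


def auc(y_true, y_prob):
    """Chance that a random positive outscores a random negative (ties count half)."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return wins / (len(pos) * len(neg))


def gini_norm(y_true, y_prob):
    """Normalized Gini for a binary target."""
    return 2 * auc(y_true, y_prob) - 1


def lift_at_top(y_true, y_prob, fraction=0.5):
    """Positive rate in the top-scored fraction divided by the overall positive rate."""
    ranked = sorted(zip(y_prob, y_true), reverse=True)
    k = max(1, int(len(ranked) * fraction))
    top_rate = sum(y for _, y in ranked[:k]) / k
    base_rate = sum(y_true) / len(y_true)
    return top_rate / base_rate


# Made-up labels and predicted probabilities for two hypothetical models.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
model_a = [0.9, 0.2, 0.8, 0.6, 0.3, 0.1, 0.7, 0.4]
model_b = [0.6, 0.5, 0.7, 0.4, 0.6, 0.3, 0.5, 0.5]

for name, probs in [("model_a", model_a), ("model_b", model_b)]:
    print(name, log_loss(y_true, probs), auc(y_true, probs), gini_norm(y_true, probs))
```

Lower log loss and higher AUC or Gini indicate the better model. Prediction time is omitted because it depends on the serving environment rather than on the predictions themselves.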

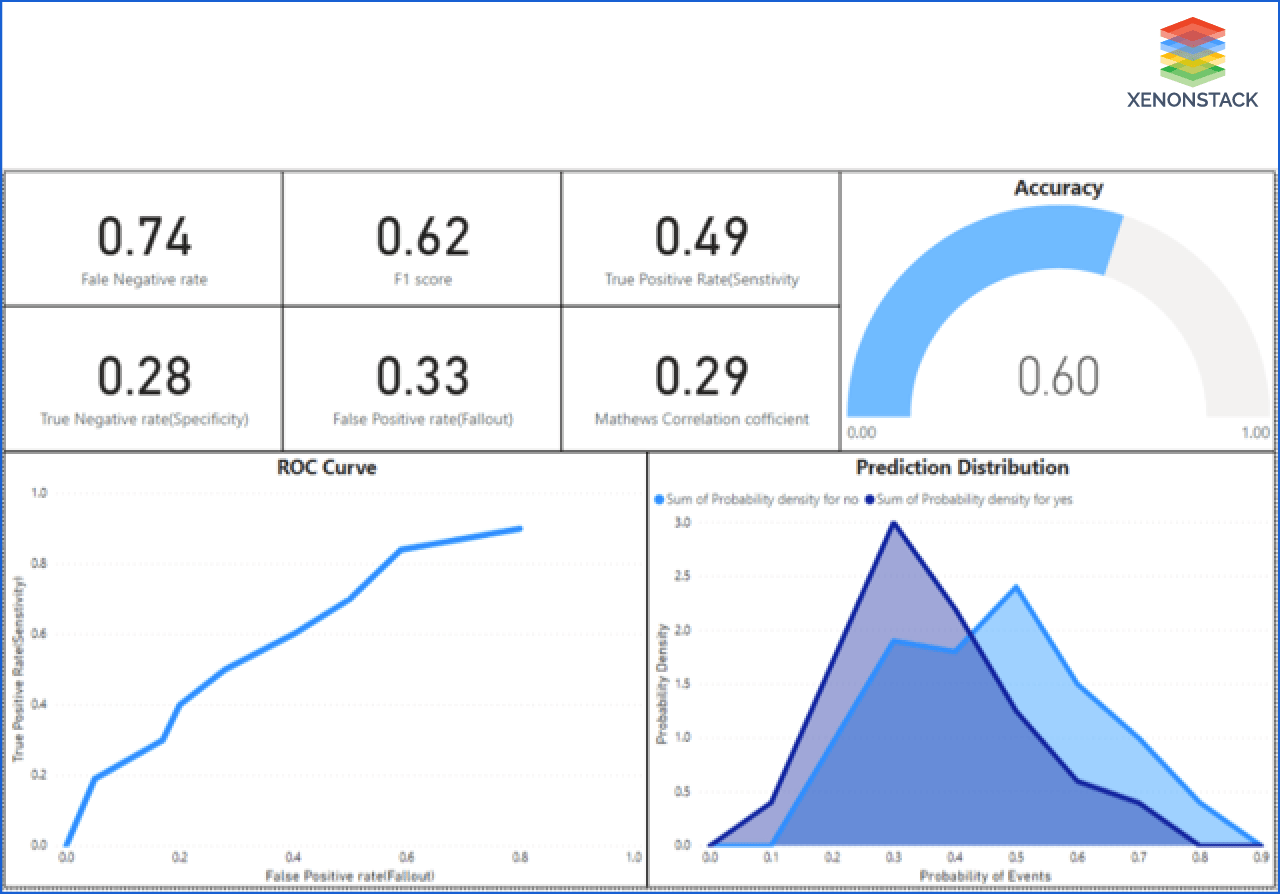

Performance of Selected Model

In the previous dashboard, Akira AI compares two models on basic attributes. Users can also analyze a single model in more depth; this dashboard explains the selected model.

- This dashboard shows various performance attributes of a single model, such as accuracy, the ROC curve, the prediction distribution, and the confusion matrix.

- The confusion matrix lets anyone with some knowledge of ML and the model assess how well the model performs.
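To make the confusion matrix concrete, here is a minimal sketch that builds one for a binary classifier at a fixed threshold and derives accuracy and a single ROC point from it. The threshold of 0.5, the data, and the function names are assumptions chosen for illustration.

```python
def confusion_matrix(y_true, y_prob, threshold=0.5):
    """Count true/false positives and negatives at the given threshold."""
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for y, p in zip(y_true, y_prob):
        predicted = 1 if p >= threshold else 0
        if predicted == 1:
            counts["tp" if y == 1 else "fp"] += 1
        else:
            counts["fn" if y == 1 else "tn"] += 1
    return counts


def accuracy(cm):
    """Share of all predictions that were correct."""
    return (cm["tp"] + cm["tn"]) / sum(cm.values())


def roc_point(cm):
    """(false positive rate, true positive rate) for this threshold."""
    fpr = cm["fp"] / (cm["fp"] + cm["tn"])
    tpr = cm["tp"] / (cm["tp"] + cm["fn"])
    return fpr, tpr


# Made-up labels and predicted probabilities.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_prob = [0.9, 0.6, 0.8, 0.4, 0.3, 0.1, 0.7, 0.2]

cm = confusion_matrix(y_true, y_prob)
print(cm, accuracy(cm), roc_point(cm))
```

Sweeping the threshold from 0 to 1 and collecting the resulting `roc_point` values traces out the ROC curve that the dashboard plots.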

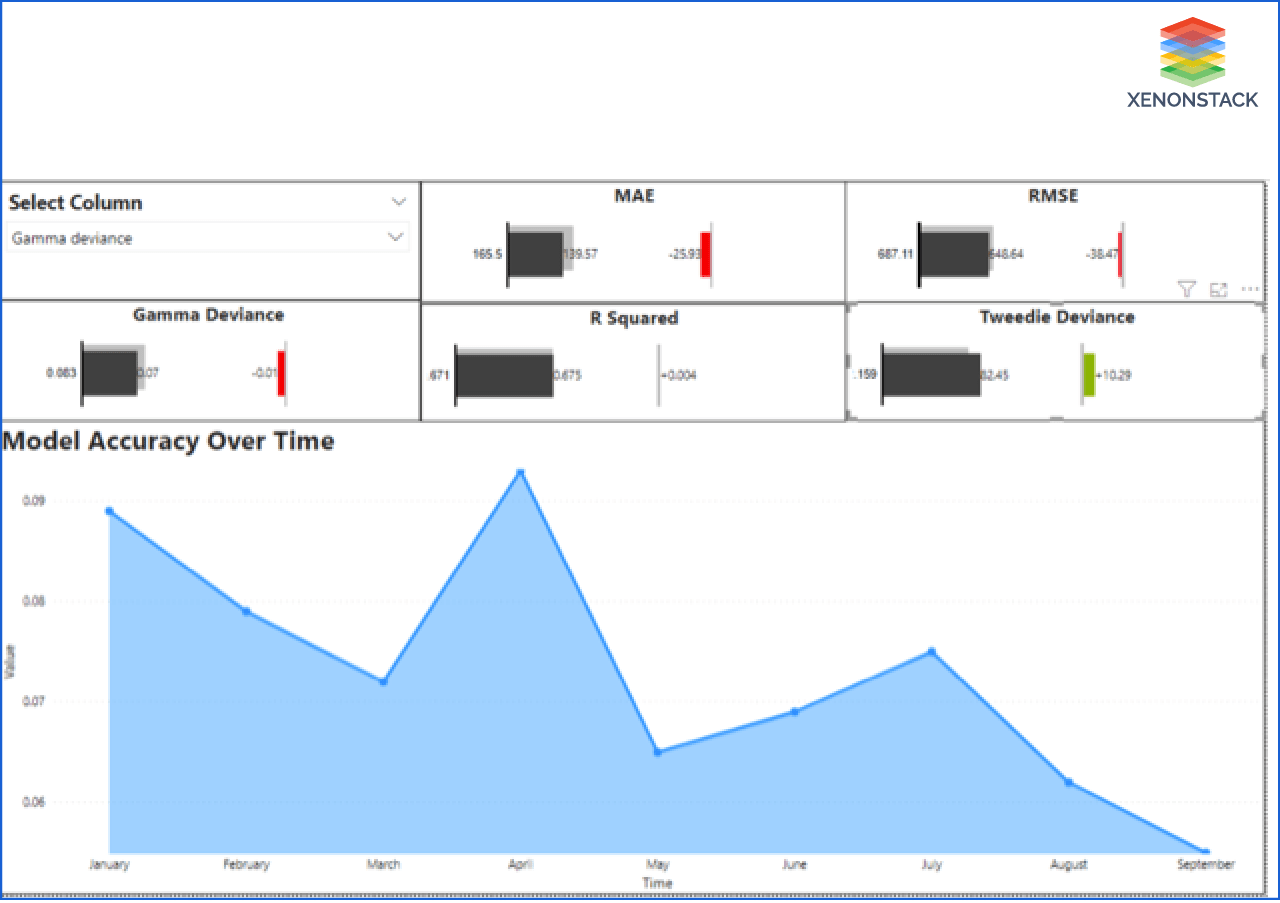

Performance of Used Model

In this step, Akira AI explains the performance of the model that is used for prediction. Users learn whether the model is working correctly, and if there is a flaw in its performance, they can decide what to do next.

A model that works well now may still underperform in the future. Akira AI therefore also provides a dashboard for continuously tracking and managing model performance.

This dashboard shows how RMSE, MAE, Gamma Deviance, R Squared, and Tweedie Deviance have changed from their previous values.

The dashboard also shows how these values change over time, so each attribute's performance can be tracked individually. Users can select any of the attributes. Here, the dashboard shows the model's RMSE over time, and the RMSE is decreasing. Because RMSE measures prediction error, a falling RMSE means the model fits the data better than before.
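The tracking described above can be sketched as follows: compute RMSE, MAE, and R Squared for each time window, then report the change in RMSE from the previous window. The weekly windows and all values are made up, and the Gamma and Tweedie deviances are left out to keep the example short.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Hypothetical weekly windows: (actual values, model predictions).
actual = [10.0, 12.0, 14.0, 16.0]
windows = {
    "week_1": (actual, [12.0, 10.0, 16.0, 14.0]),
    "week_2": (actual, [11.0, 11.0, 15.0, 15.0]),
    "week_3": (actual, [10.5, 11.5, 14.5, 15.5]),
}

rmse_history = []
for label, (y_true, y_pred) in windows.items():
    current = rmse(y_true, y_pred)
    # Change from the previous window; None for the first window.
    delta = current - rmse_history[-1] if rmse_history else None
    rmse_history.append(current)
    print(label, current, mae(y_true, y_pred), r_squared(y_true, y_pred), delta)

# True when RMSE fell in every window compared with the one before it.
rmse_improving = all(b < a for a, b in zip(rmse_history, rmse_history[1:]))
```

A negative change means the error shrank in that window. A sustained positive change would be the signal to investigate the model or retrain it.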

What are the features of Model Explanation?

- Akira AI uses visualizations to give a clear and understandable explanation of why it recommends and selects particular models for an AI system. At a glance, users can identify which model is a good choice for a particular AI system.

- It provides in-depth information on the attributes a model depends on, which developers use to track the model's quality while it predicts outputs.

- It shows the trends of various model attributes, including accuracy, over time, so that a deployer or user can see when a model's performance dropped.

- Users can also follow the model's health over time from the same dashboard and decide whether they need to change the model.

Explainable AI (XAI) solutions aid in characterizing model accuracy, fairness, transparency, and outcomes in AI-powered decision making. Taken From Article, AI Principles and ModelOps Solutions

Conclusion

Akira AI provides complete interpretability of its models and their performance, which users can use to analyze a model. The dashboards show the values of various factors that measure how the model is working, making it easier to find and solve problems. The factors used to measure performance can vary from model to model.

